Some Models are Useful

Why build models of malaria? Are malaria models useful for policy?

The appropriate use of mathematical models is nothing more than thinking carefully and quantitatively about the problem at hand.

I believe that models can be useful, but they can also be used badly. To use models well, a malaria program needs to invest in high-quality information systems that are capable of supporting evidence-based policies. Since malaria programs need these information systems anyway, they ought to be doing some simulation-based analytics. Within an evidence-based malaria program, mathematical models can be used to synthesize and analyze information about humans, parasites, mosquitoes, health systems, and vector control programs in ways that are well beyond our innate quantitative capabilities.

Models are Ubiquitous

To understand mathematical modeling, I think it helps to step away from mathematics and malaria and start with a very basic description of how we think.

Information comes to us through our senses, and our brains assemble and analyze that information into a representation of reality. We can scarcely function without some sort of representation, and we often trust that representation enough to act on it. Give someone a photograph, and they will do scene analysis in a flash. To think is to build a model, so we are constantly building models. Most of the time, that process works just fine, but there is an entire branch of psychology devoted to studying errors in perception.

There are natural limits to our ability to think. In some cases, we turn to devices that extend our cognitive abilities to assemble and transmit information, such as mathematical models or road maps. In some ways, a model works like a road map, in which essential information about location is abstracted and miniaturized. Information, visual cues, and their spatial relationships are represented in miniature form to help us find our way around. Good maps are accurate enough that you can find your way around a place you’ve never been.

Like a map, a mathematical model is a device that helps us organize and analyze complex, quantitative information. While models are ubiquitous, mathematical models are rare. It takes some skill to learn how to build and interpret one.

Models are Approximations

Ross’s models of malaria are a good starting point for understanding how malaria works in populations, but they are not realistic enough to do much more than teach us the basics. Ross’s models lack structure to handle features such as seasonality, spatial dynamics, malaria importation, immunity, disease, demography, or malaria control. Ross was a starting point: the simple model created a scaffolding that we use to think about all these factors, but only if we break it.

If we want to do anything useful with a model, we must not treat it with reverence. If we want to use models properly, we must start to understand the model as an approximation of a very messy process. It should be scrutinized and pressure tested. The model might not be useful.

It is also important that a mathematical model can be pressure tested in a way that is not possible with models that are never defined with any rigor. In doing all this, we must remember that a model is a representation of reality. Since reality is very messy, complex, and stochastic, models are approximations.

A famous aphorism, “All models are wrong, but some of them are useful,” is often attributed to George Box. In his research, Box used mathematical models to help him understand complex phenomena. He was interested in the question of robustness. His approach, practical and iterative model building, is a model for malaria. The point of a model is to get the gist of a problem, even if we are missing some of the information we would need to fully describe it quantitatively. For Box, the question was how we could avoid getting it wrong. That quote does not appear anywhere in print, but he did say:

Remember that all models are wrong; the practical question is how wrong do they have to be to not be useful. [1]

In taking on the question of how to use models properly, we find some wonderful metaphors in arts and literature.

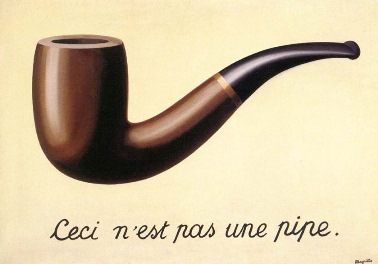

It is not an easy thing to build and test a model, and in getting the model to work, we run the danger of believing the model. In “The Treachery of Images,” a 1929 painting by the Belgian surrealist René Magritte, we read beneath an image of a pipe: this is not a pipe.

A model of malaria is not malaria. It is merely a device we use to help us reason. The question is how we could be getting it wrong.

Realism and Parsimony

If we want to get it right, we should spend most of our time thinking about how we might get it wrong. A practical challenge is that a model often represents a small part of the process. To imagine how we might get it wrong, we should probably try to wrap our minds around the whole process. We want to characterize and quantify uncertainty systematically, and when we pass on advice, we should have tried to propagate that uncertainty through our analysis. This is the essence of what we’ve called Robust Analytics for Malaria Policy, or RAMP.
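To make the idea of propagating uncertainty concrete, here is a minimal sketch. It assumes a Ross-style model in which prevalence x follows dx/dt = h(1 - x) - rx, so the equilibrium prevalence is h / (h + r). The parameter ranges, the function names, and the choice of uniform distributions are all hypothetical, chosen only for illustration.

```python
import random
import statistics


def equilibrium_prevalence(h, r):
    """Equilibrium of dx/dt = h*(1 - x) - r*x (assumed Ross-style model)."""
    return h / (h + r)


def propagate(n_draws=10_000, seed=1):
    """Sample uncertain inputs and summarize the spread of the output."""
    rng = random.Random(seed)
    draws = []
    for _ in range(n_draws):
        # Hypothetical ranges (per day); a real analysis would justify these.
        h = rng.uniform(0.001, 0.01)       # force of infection
        r = rng.uniform(1 / 300, 1 / 100)  # recovery rate
        draws.append(equilibrium_prevalence(h, r))
    draws.sort()
    lower = draws[int(0.025 * n_draws)]      # approximate 2.5th percentile
    upper = draws[int(0.975 * n_draws) - 1]  # approximate 97.5th percentile
    return statistics.median(draws), lower, upper


median, lower, upper = propagate()
print(f"median prevalence: {median:.2f}, 95% interval: {lower:.2f} to {upper:.2f}")
```

The point of the sketch is the shape of the workflow, not the numbers: the advice we pass on is an interval that reflects what we do not know about the inputs, rather than a single value.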

In his short story, “On Rigor in Science,” Jorge Luis Borges describes an empire where the cartographers create a detailed map of the empire that is the same size as the empire. That is probably not useful, but if we want to do robust analytics, we need the models to be realistic enough to address the problem at hand, including failure modes. We don’t want to worry about everything, but we need to get the gist of a problem.

A quote that is often attributed to Einstein:

A model should be as simple as possible, but no simpler.

While it’s not clear if Einstein ever actually said it, the sentiment concisely captures the spirit of scientific inference. The idea is a version of Occam’s Razor: in a sea of competing explanations, the simple ones tend to be correct, so we should shave away all that is unnecessary. The idea is encapsulated in parsimony. To be parsimonious literally means to be cheap. In scientific inference, parsimony is a way of describing tradeoffs between bias and variance: on one side are models that are too simple and stiff to get it right; on the other are models that convey less information because the confidence limits on their parameters are too large to be useful.

In policy, it’s not a battle between the good of simplicity and the evil of complex detail. It is, instead, a struggle we must engage in to identify those details that are most relevant. If we want to shave with Occam’s Razor in policy, we will often need to ask questions about hypothetical situations that could not possibly rely on data because nothing like them has ever been tried.

As we step into malaria policy, we are often asked to weigh in on questions that would not bear scrutiny. If we don’t offer advice based on the best available evidence, even if it is weak, then the policy will be developed without any evidence. In these situations, models can be used in a structured way to explore the uncertainty. What don’t we know and how could we get it wrong? Perhaps more importantly, what are some practical ways of learning what we need to know? Among all the things we don’t know, what knowledge or data gaps should we try to fill first?

The emphasis is on understanding failure modes, and in trying to control or understand something as complex as malaria, there is a lot that could go wrong.

Simulacra

The absurd full-sized map made by imaginary cartographers in a fictitious country has lived on. Borges’s story has been referenced in novels and philosophical works since then, including Baudrillard’s Simulacra and Simulation. Baudrillard was not a scientist, and his book has rarely been used to critique science, but in building and applying models of malaria, his critique has always resonated:

The simulacrum is never that which conceals the truth—it is the truth which conceals that there is none. The simulacrum is true.

There is something about the denizens of the Ivory Tower that makes them susceptible to simulacra. Baudrillard’s ideas offer a useful way of framing a critique of malaria modeling over the past 120 years. Baudrillard identified four stages: 1) sacramental order; 2) perversion of reality; 3) order of sorcery; and 4) simulacra.

A sacramental order was established when Ronald Ross published his models. In malaria, the ideas and concepts were treated as profound. The models and concepts motivated development of malaria metrics and directed population research in malaria. McKendrick’s analysis gave a probabilistic foundation for Ross’s deterministic models. Alfred Lotka, a mathematician, wrote an extensive analysis of the model.

The perversion of reality took place over several decades as Ross’s model and ideas were put into practice. Despite all the attention, the underlying assumptions of that model were not seriously called into question in the theoretical work. In 1975, Macdonald wrote:

The mathematical studies of Ross appeared an attractive approach to new explanation, but experiment showed they did not complete the picture or provide explanation…

The models had all made the same set of simplifying assumptions. When compared with reality, the shortcomings undermined faith in the modeling. This was the perversion of reality.

Macdonald played a key role in the next phase, establishing the order of sorcery. Among his publications in the 1950s, three provided a synthesis of malaria data up to that point on malaria epidemiology [2], malaria immunity [3], and medical entomology [4]. In 1950, George Macdonald published a model of superinfection that questioned one of the underlying assumptions in Ross’s model. Then, in 1952, Macdonald’s papers showed mathematically that malaria transmission was highly sensitive to adult mosquito mortality rates [4], and he published a formula for the basic reproductive number for malaria [5]. In one sense, Macdonald’s papers appeared to re-establish the sacramental order, but many of the same issues that had undermined faith in the models remained unresolved. Meanwhile, the Global Malaria Eradication Programme was moving forward under the leadership of Fred Soper [6]. Macdonald’s math played an important role, backstopping some open questions and providing a rationale for eradication [7–10]. His papers had not substantially addressed most of the major failings of the models, but they did breathe new life into them.
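The sensitivity to adult mosquito mortality can be illustrated with a short sketch. The text does not give Macdonald’s formula, so this assumes a commonly cited modern form of the Ross-Macdonald basic reproductive number, R0 = m a² b c e^(-gn) / (g r). The symbol names and all parameter values below are assumptions for illustration, not values from Macdonald’s papers.

```python
import math


def macdonald_r0(m, a, b, c, g, n, r):
    """Assumed modern form of the Ross-Macdonald R0.

    m: mosquitoes per human          a: human blood meals per mosquito per day
    b: mosquito-to-human efficiency  c: human-to-mosquito efficiency
    g: adult mosquito death rate     n: extrinsic incubation period (days)
    r: human recovery rate (per day)
    """
    return m * a**2 * b * c * math.exp(-g * n) / (g * r)


# Hypothetical baseline values, chosen only for illustration.
baseline = dict(m=10.0, a=0.3, b=0.5, c=0.5, g=0.1, n=12.0, r=0.005)

r0_base = macdonald_r0(**baseline)
# Halve mosquito density (e.g. larval control): R0 scales linearly with m.
r0_half_density = macdonald_r0(**{**baseline, "m": baseline["m"] / 2})
# Double adult mortality: g appears in both the denominator and the exponent.
r0_double_mortality = macdonald_r0(**{**baseline, "g": baseline["g"] * 2})

print(f"halving density leaves {r0_half_density / r0_base:.0%} of baseline R0")
print(f"doubling mortality leaves {r0_double_mortality / r0_base:.0%} of baseline R0")
```

Under this assumed form, mortality enters twice: a mosquito must survive the incubation period to become infectious, and it then transmits only for the rest of its life. That is why a proportional change in mortality moves R0 more than the same proportional change in density.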

Under the guise of global policy, the models became simulacra. The ideas were integrated into official documents and action plans. The models are used to shape research agendas and advocacy messaging. General theory gets used to direct malaria policy and resources, with minimal information about reality and largely without evaluation.

In some ways, Ross himself initiated the second stage when he described features of malaria that were missing from simple models. In the academic tradition, some of these concerns were eventually addressed by new models, but those models appeared decades later. After each advance, the new models re-establish a new sacramental order.

Research and policy operate under a set of assumptions based on current malaria theory. The qualitative analysis of the simple models is passed on as truth, largely without integrating ideas about the model limitations. The models may be wrong, but since there is an urgent need for information and something needs to be done, the models are deemed good enough. Comparisons to reality are no longer of interest. To resolve issues, debates are settled by international normative bodies and codified in official documents. Objections about complexity and accuracy are criticized as being unrealistic, or out of touch, or impractical.

Scalable Complexity

A challenge for malaria modeling is not simplification but elaboration. The starting point for model building – a Ross-Macdonald Model – has only got the basics.

Complex Adaptive Systems

Malaria is complex and messy, but our models don’t need to get every feature of malaria right. There’s a trap in chasing complexity and realism too far. All models are approximations, and we just need to get the gist of it. We probably don’t need to know everything to make good decisions, and we will rarely be able to offer a high degree of certainty. We don’t need a perfect model, but it should be pretty damned good. To develop robust analytics, it’s important to remember that all models are wrong, but some models are useful. (This statement, often used in one form or another, was paraphrased from George P. Box. This approximates the actual quote, but it’s still useful.)