I will be recruiting an iSchool or CSE Ph.D. student during the Autumn 2026 admissions cycle, to start Autumn 2027. I'm excited to work with students interested in critical, liberatory CS and AI education. Have questions that aren't answered here? Write me.

My research imagines and enables equitable, joyous, liberatory learning about computing and information, in schools and beyond.

I work with outstanding postdocs, doctoral students, teachers, undergraduates and communities on this vision. My current projects within this goal are shaped by the faculty, students, and teachers in the Center for Learning, Computing, and Imagination, our partner teachers, school leaders, and families, and generous external funding.

We publish primarily in Computing Education and Human-Computer Interaction. I also work to broaden research discourse as Editor-in-Chief of ACM TOCE and sustain peer review with Reciprocal Reviews. We share our discoveries by blogging, presenting, teaching, writing, and connecting with community, including the CS for All Washington advocacy community and the PNW CS Teach consortium of teacher educators.

Want to do research with me? Read about my lab, and join us in creating a more equitable future of computing that includes everyone.

Discoveries

My lab and I have discovered many things since I started doing research in 1999. Here are some of the highlights from our work. How I describe these is always evolving as we learn more.

Programming languages can be designed to include everyone (2011 — 2026)

Programming languages can embody values of diversity, equity, inclusion, justice, and accessibility, but it requires careful design and attention to ability, culture, language, and more. These papers deconstruct these nuances, while also offering novel designs, uses, and conceptions of programming languages.

CS assessments can be biased, but it's hard to know how, why, and what to do about it (2017 — 2026)

Across multiple studies, we have examined the use of psychometrics to understand bias in CS assessments, and contributed new methods and assessments to overcome bias.

Teaching youth about the injustices of computing and society requires teacher transformation and liberatory pedagogies (2020 — 2026)

This work finds that making room for conversations about computing, society, and diversity demands deep respect for students' limiting situations, but also liberatory pedagogies and teacher courage.

Becoming a CS teacher requires equity and resources that CS culture resists (2019 — 2026)

Teachers need time, resources, and community support to teach equitably, but CS culture often resists these needs in favor of individual achievement and technical confidence, structuring who does and does not learn CS.

Teaching CS students how to create inclusive, equitable software requires new pedagogies and methods, but also substantial teacher professional development (2015 — 2026)

These works show that both teachers and learners often resist this topic due to fear of failure, not disinterest.

People's interests and learning of computing are intricately shaped by their social worlds and identities (2009 — 2026)

We have found across a series of reflective studies that students' identities and lived experiences intricately shape their interest and disinterest in computing.

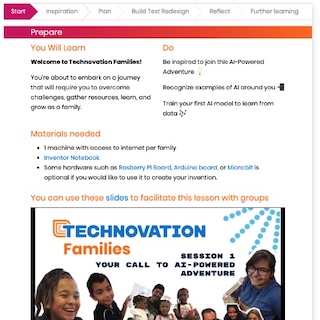

Learning to code as a family can enable rich learning that disrupts intergenerational hierarchies (2018 — 2025)

These papers consider learning about programming and AI in the context of families, and how this differs from individual and classroom contexts.

Understanding machine learning means understanding uncertainty (2005 — 2025)

Tools can help, but using data and domains that people understand helps even more.

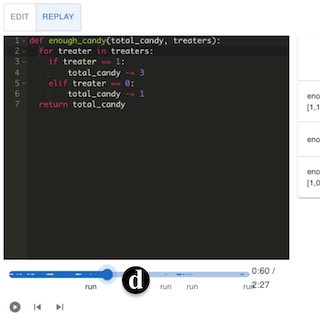

Text-based code editors can be richly interactive (2004 — 2025)

The structured editors of the 1980s were hard to build and use; I invented ways of making both easier by viewing programs as user interfaces, not documents.

The tools and systems around programming languages are a primary source of learning difficulty (2000 — 2025)

Programming is hard for many reasons, but my work showed that it is also hard because tools, APIs, and IDEs make information about program behavior particularly difficult to find.

Programming problem solving can benefit greatly from guided, step-by-step scaffolding (2015 — 2023)

Most learners don't want to be that deliberate about their process, favoring less effective trial and error strategies. But framing it as authentic practice can help.

Software engineering expertise is technical, but also social, organizational, and political (2015 — 2023)

Across thousands of surveys, interviews, and observations, we have found that software development expertise is far more than just knowing how to architect and build software.

Materials for learning CS online largely ignore pedagogical best practices (2017 — 2022)

They fail to provide feedback, scaffold effectively, grow self-efficacy, or develop mastery, often because learners struggle to effectively deploy their agency.

Learning APIs requires precise forms of knowledge that documentation often does not provide (2004 — 2021)

APIs are often poorly designed, documented, and supported, making them difficult for developers to learn and use effectively. These studies reveal the particular forms of knowledge documentation and tutorials need to provide for people to successfully use APIs.

It is possible to mine, transform, and synthesize interfaces to serve new use cases and users (2015 — 2021)

Reasoning about user interfaces in probabilistic and formal ways can enable new forms of accessibility and productivity.

Studying programming requires human-centered methods (2006 — 2021)

Studying programming is hard. We invent new methods for studying programming, and reflect on the science of studying programming, to help accelerate progress on improving it.

Teaching program reading before writing can promote more robust learning (2011 — 2019)

This is because writing skills are dependent on reading skills. Unfortunately, learning to read code correctly can be boring.

Software engineering depends on information (2006 — 2019)

Through a series of studies, I uncovered the many ways that developers depend on information from people and systems to make engineering decisions, and how some of the most crucial information is hard or impossible to find.

Some defects can be found by operationalizing regularities in human expression (2005 — 2018)

Many defects in dynamically typed programs can be found by operationalizing simple observations about how people write code, often forgetting to close the loop that statically typed programs can easily point out.

Finding help with software can be as simple as pointing (2006 — 2015)

Pointing to user interface elements can be a powerfully discriminating input into help retrieval algorithms.

Programs can answer questions about their behavior (2004 — 2013)

I invented tools and algorithms for deriving 'why' and 'why not' questions from programs and automatically answering those questions, helping people efficiently and interactively debug the root causes of program failures.

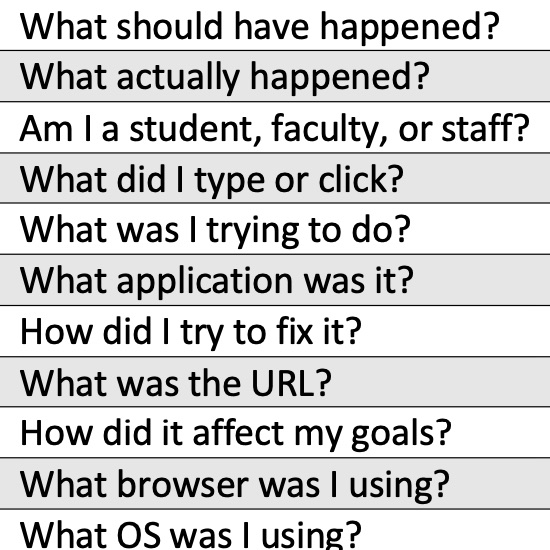

Bug reports are where developers and users negotiate what "defective" means (2003 — 2012)

The seemingly technical context of bug reports is where large communities of users and small teams of developers engage in power struggles about what software should and shouldn't do.

Design skills depend greatly on domain expertise (2006 — 2012)

We found through several studies that designers' productivity and careers are often limited by their lack of domain expertise.

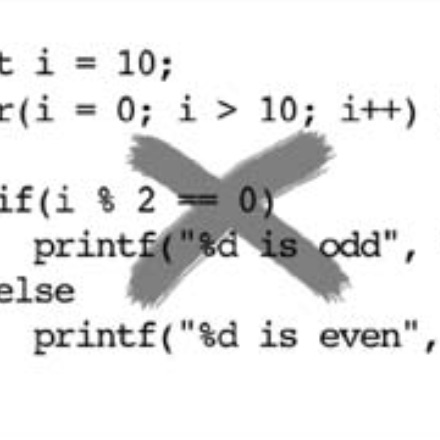

Defects emerge from the interaction of satisficing and state space complexity (2002 — 2005)

Much of my work during my dissertation examined where software failures come from; cognitive slips interact with the large state space that people create when programming to generate defects that are hard to localize.

Last updated 6/1/2026. To the extent possible under law, Amy J. Ko has waived all copyright and related or neighboring rights to the design and implementation of Amy's faculty site. This work is published from the United States. See this site's GitHub repository to view source and provide feedback.
