Requirements

Once you have a problem, a solution, and a design specification, it’s entirely reasonable to start thinking about code. What libraries should we use? What platform is best? Who will build what? After all, there’s no better way to test the feasibility of an idea than to build it, deploy it, and find out if it works. Right?

It depends. This mentality towards product design works fine if building and deploying something is cheap and getting feedback has no consequences. Simple consumer applications often benefit from this approach, especially early-stage ones, because there’s little to lose. For example, if you are starting a company and do not even know if there is a market opportunity yet, it may be worth quickly prototyping an idea, seeing if there’s interest, and only later thinking about how to carefully architect a product that meets that opportunity. This is how products such as Facebook started, with a poorly implemented prototype that revealed an opportunity, which was only later translated into a functional, reliable software service.

However, what if a prototype or beta isn’t cheap to build? What if your product only has one shot at adoption? What if you’re building something for a client and they want to define success? Worse yet, what if your product could kill people if it’s not built properly? Consider the U.S. HealthCare.gov launch, for example, which was lambasted for its countless defects and poor scalability at launch, only working for 1,100 simultaneous users, when 50,000 were expected and 250,000 actually arrived. To prevent disastrous launches like this, software teams have to be more careful about translating a design specification into a specific, explicit set of goals that must be satisfied in order for the implementation to be complete. We call these goals requirements and we call this process requirements engineering [7].

In principle, requirements are a relatively simple concept. They are simply statements of what must be true about a system to make the system acceptable. For example, suppose you were designing an interactive mobile game. You might want to write the requirement “The frame rate must never drop below 60 frames per second.” This could be important for any number of reasons: the game may rely on interactive speeds, a high frame rate may be key to creating a sense of realism, or your company may have a reputation for high performance, high fidelity graphics, and achieving any less would erode its brand. Whatever the reasons, expressing it as a requirement makes it explicit that any version of the software that doesn’t meet that requirement is unacceptable, and sets a clear goal for engineering to meet.
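To make the idea of a “clear goal” concrete, here is a minimal sketch of how the frame rate requirement could be written as an executable check. Everything in it is an assumption for illustration: the function name, the idea that the game can report per-frame render times in milliseconds, and the sample measurements are all hypothetical.

```python
# Hypothetical threshold taken from the example requirement in the text.
MIN_FPS = 60.0


def meets_frame_rate_requirement(frame_times_ms: list[float],
                                 min_fps: float = MIN_FPS) -> bool:
    """Return True only if every measured frame fits the time budget.

    "Never drop below 60 frames per second" means no single frame may take
    longer than 1000 / 60 milliseconds (about 16.67 ms) to render.
    """
    budget_ms = 1000.0 / min_fps
    return all(t <= budget_ms for t in frame_times_ms)


# Hypothetical measurements from two play sessions.
smooth_session = [16.0, 15.5, 16.6]
janky_session = [16.0, 20.0, 15.0]   # the 20 ms frame is only 50 fps

print(meets_frame_rate_requirement(smooth_session))
print(meets_frame_rate_requirement(janky_session))
```

Note that the word “never” forces the check to look at the slowest frame rather than the average. A requirement phrased in terms of average frame rate would lead to a different and more forgiving check.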

The general idea of writing down requirements is actually a controversial one. Why not just discover what a system needs to do incrementally, through testing, user feedback, and other methods? Some of the original arguments for writing down requirements acknowledged that software is necessarily built incrementally, but argued that it is nevertheless useful to write down requirements from the outset [6]. This is because requirements help you plan everything: what you have to build, what you have to test, and how to know when you’re done. The theory is that by defining requirements explicitly, you plan, and by planning, you save time.

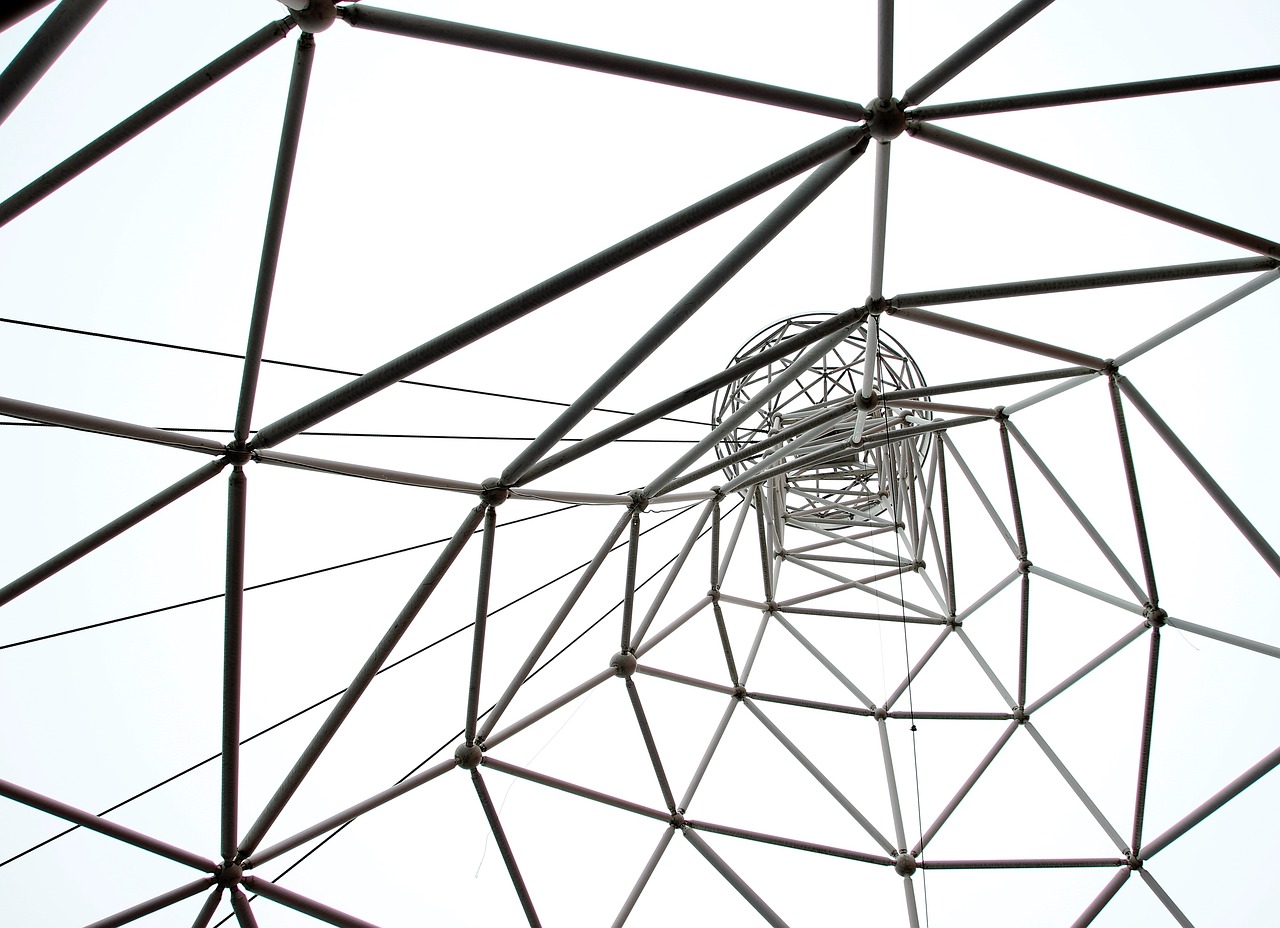

Do you really have to plan by writing down requirements? For example, why not do what designers do, expressing requirements in the form of prototypes and mockups? These implicitly state requirements, because they suggest what the software is supposed to do without saying it directly. But for some types of requirements, they actually imply nothing. For example, how responsive should a web page be? A prototype doesn’t really say, whereas a written requirement that the average page load time be less than 1 second leaves no doubt. Requirements can therefore be thought of more like an architect’s blueprint: they provide explicit definitions and scaffolding of project success.

And yet, like design, requirements come from the world and the people in it, not from software [2]. Because they come from the world, requirements are rarely objective or unambiguous. For example, some requirements come from law, such as the European Union’s General Data Protection Regulation (GDPR), which specifies a set of data privacy requirements that all software systems used by people in the EU must meet. Other requirements might come from public pressure for change, as in Twitter’s decision to label particular tweets as having false information or hate speech. Because the sources are so varied, the methods that people use to do requirements engineering are quite diverse. Requirements engineers may work with lawyers to interpret policy. They might work with regulators to negotiate requirements. They might also use design methods, such as user research and rapid prototyping, to iteratively converge toward requirements [3]. The big difference between design and requirements engineering, then, is that requirements engineers take the process one step further than designers, enumerating in detail every property that the software must satisfy, and engaging with every source of requirements a system might need to meet, not just user needs.

There are some approaches to specifying requirements formally. These techniques allow requirements engineers to automatically identify conflicting requirements, so they don’t end up proposing a design that can’t possibly exist. Some even use systems to make requirements traceable, meaning a high-level requirement can be linked directly to the code that meets that requirement [4]. All of this formality has tradeoffs: not only does it take more time to be so precise, but it can negatively affect creativity in concept generation as well [5].
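As a rough illustration of what automatic conflict detection can look like, the sketch below treats each requirement as a numeric bound on a named system property and flags pairs whose bounds cannot both hold. This is a deliberately tiny toy, not one of the formal methods from the literature: the `Bound` class, the `find_conflicts` function, the property names, and the example requirements are all hypothetical.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class Bound:
    """A hypothetical formal requirement: a numeric bound on one property."""
    req_id: str
    prop: str                          # name of the constrained property
    minimum: Optional[float] = None    # property must be >= minimum
    maximum: Optional[float] = None    # property must be <= maximum


def find_conflicts(reqs: list[Bound]) -> list[tuple[str, str]]:
    """Return pairs of requirement ids that cannot both be satisfied."""
    conflicts = []
    for i, a in enumerate(reqs):
        for b in reqs[i + 1:]:
            if a.prop != b.prop:
                continue  # bounds on different properties never clash here
            # Tightest lower and upper bound implied by the pair.
            lo = max(x for x in (a.minimum, b.minimum, float("-inf"))
                     if x is not None)
            hi = min(x for x in (a.maximum, b.maximum, float("inf"))
                     if x is not None)
            if lo > hi:
                conflicts.append((a.req_id, b.req_id))
    return conflicts


# Hypothetical requirements for an app's startup behavior.
EXAMPLE = [
    # R1: the app must be interactive within 1 second of launch.
    Bound("R1", "startup_seconds", maximum=1.0),
    # R2: the branding splash screen must stay up for at least 2 seconds.
    Bound("R2", "startup_seconds", minimum=2.0),
    # R3: the frame rate must never drop below 60 frames per second.
    Bound("R3", "frame_rate_fps", minimum=60.0),
]

print(find_conflicts(EXAMPLE))
```

Here R1 and R2 are reported as conflicting, because no startup time is both at most 1 second and at least 2 seconds. The check is only possible because the requirements were written precisely enough for a program to compare them, which is exactly the cost in time and effort that formality demands.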

Expressing requirements in natural language can mitigate these effects, at the expense of precision. They just have to be complete, precise, non-conflicting, and verifiable. For example, consider a design for a simple to-do list application. Its requirements might be something like the following:

- Users must be able to add to-do list items with a single action.

- To-do list items must contain text and a binary completed state.

- Users must be able to edit the text of to-do list items.

- Users must be able to toggle the completed state of to-do list items.

- Users must be able to delete to-do list items.

- All changes made to the state of to-do list items must be saved automatically without user intervention.

Let’s review these requirements against the criteria for good requirements that I listed above:

- Is it complete? I can think of a few more requirements: is the list ordered? How long does state persist? Are there user accounts? Where is data stored? What does it look like? What kinds of user actions must be supported? Is delete undoable? Even on just this completeness dimension, you can see how a very simple application can become quite complex. When you’re generating requirements, your job is to make sure you haven’t forgotten important requirements.

- Is the list precise? Not really. When you add a to-do list item, is it added at the beginning? The end? Wherever a user requests it be added? How long can the to-do list item text be? Clearly the first requirement above is imprecise. And imprecise requirements lead to imprecise goals, which means that engineers might not meet them. Is this to-do list team okay with not meeting its goals?

- Are the requirements non-conflicting? I think they are, since they all seem to be satisfiable together. But some of the missing requirements might conflict. For example, suppose we clarified the imprecise requirement about where a to-do list item is added. If the requirement was that it be added to the end, is there also a requirement that the window scroll to make the newly added to-do item visible? If not, would the first requirement of making it possible for users to add an item with a single action be achievable? They could add it, but they wouldn’t know they had added it because of this usability problem, so is this requirement met? This example shows that reasoning through requirements is ultimately about interpreting words, finding sources of ambiguity, and trying to eliminate them with more words.

- Finally, are they verifiable? Some more than others. For example, is there a way to guarantee that the state saves successfully all the time? That may be difficult to prove given the vast number of ways the operating environment might prevent saving, such as a failing hard drive or an interrupted internet connection. This requirement might need to be revised to allow for failures to save, which itself might have implications for other requirements in the list.

Now, the flaws above don’t make the requirements “wrong.” They just make them “less good.” The more complete, precise, non-conflicting, and verifiable your requirements are, the easier it is to anticipate risk, estimate work, and evaluate progress, since requirements essentially give you a to-do list for implementation and testing.
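One way to feel both the value and the gaps of the to-do list requirements is to try turning them into code and checks. The sketch below is a minimal in-memory model, not a real application: the class names, the `saves` counter that stands in for persistence, and the decision to add new items at the end of the list are all assumptions I had to invent because the requirements do not say.

```python
from dataclasses import dataclass, field


@dataclass
class TodoItem:
    text: str
    completed: bool = False          # binary completed state


@dataclass
class TodoList:
    items: list[TodoItem] = field(default_factory=list)
    saves: int = 0                   # counts automatic saves (stand-in for storage)

    def _save(self) -> None:
        # Assumption: saving always succeeds, which the verifiability
        # discussion above points out is hard to guarantee in practice.
        self.saves += 1

    def add(self, text: str) -> TodoItem:
        # Assumption: new items go at the end; the requirement is silent.
        item = TodoItem(text)
        self.items.append(item)
        self._save()
        return item

    def edit(self, index: int, text: str) -> None:
        self.items[index].text = text
        self._save()

    def toggle(self, index: int) -> None:
        self.items[index].completed = not self.items[index].completed
        self._save()

    def delete(self, index: int) -> None:
        del self.items[index]
        self._save()


def check_requirements() -> None:
    """Exercise each listed requirement once against the model."""
    todo = TodoList()
    todo.add("buy milk")                         # add with a single action
    assert todo.items[0].text == "buy milk"      # item contains text...
    assert todo.items[0].completed is False      # ...and a binary state
    todo.edit(0, "buy oat milk")                 # edit the text
    assert todo.items[0].text == "buy oat milk"
    todo.toggle(0)                               # toggle completed state
    assert todo.items[0].completed is True
    todo.delete(0)                               # delete the item
    assert todo.items == []
    assert todo.saves == 4                       # every change triggered a save


check_requirements()
```

Each assertion corresponds to one requirement, which is what makes the list useful for testing. Each comment marked as an assumption corresponds to a question the requirements left unanswered.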

Lastly, remember that requirements are translated from a design, and designs have many more qualities than just completeness, precision, feasibility, and verifiability. Designs must also be legal, ethical, and just. Consider, for example, the anti-Black redlining practices pervasive throughout the United States. Even through the 1980s, it was standard practice for banks to lend to lower-income white residents, but not Black residents, even middle-income or upper-income ones. Banks in the 1980s wrote software to automate many lending decisions; would a software requirement such as this have been legal, ethical, or just?

No loan application with an applicant self-identified as a person of color should be approved.

That requirement is both precise and verifiable. In the 1980s, it was legal. But was it ethical or just? Absolutely not. Therefore, requirements, no matter how formally extracted from a design specification, no matter how consistent with law, and no matter how aligned with an organization’s priorities, should be free of racist ideas. Requirements are just one of many ways that such ideas are manifested, and ultimately hidden in code [1].

References

1. Ruha Benjamin (2019). Race after technology: Abolitionist tools for the New Jim Code. Polity Books.

2. Michael Jackson (2001). Problem frames. Addison-Wesley.

3. Axel van Lamsweerde (2008). Requirements engineering: from craft to discipline. ACM SIGSOFT Foundations of Software Engineering (FSE).

4. Patrick Mäder, Alexander Egyed (2015). Do developers benefit from requirements traceability when evolving and maintaining a software system? Empirical Software Engineering.

5. Rahul Mohanani, Paul Ralph, Ben Shreeve (2014). Requirements fixation. ACM/IEEE International Conference on Software Engineering.

6. David L. Parnas, Paul C. Clements (1986). A rational design process: How and why to fake it. IEEE Transactions on Software Engineering.

7. Ian Sommerville, Pete Sawyer (1997). Requirements engineering: a good practice guide. John Wiley & Sons, Inc.