History

Computers haven’t been around for long. If you read one of the many histories of computing and information, such as James Gleick’s The Information 4 , Jonathan Grudin’s From Tool to Partner: The Evolution of Human-Computer Interaction 5 , or Margot Lee Shetterly’s Hidden Figures 11 , you’ll learn that before digital computers, computers were people, calculating things manually. You’ll also learn that after digital computers arrived, programming wasn’t something that many people did. It was reserved for whoever had access to the mainframe, and they wrote their programs on punchcards. Computing was in no way a ubiquitous, democratized activity; it was reserved for the few who could afford and maintain a room-sized machine.

Because programming required such painstaking planning in machine code and computers were slow, most programs were not that complex. Their value was in calculating things faster than a person could do by hand, which meant thousands of calculations in a minute rather than one calculation in a minute. Computer programmers were not solving problems that had no solutions yet; they were translating existing solutions (for example, the quadratic formula) into machine instructions. Their power wasn’t in creating new realities or facilitating new tasks; it was in accelerating old tasks.

The birth of software engineering, therefore, did not come until programmers started solving problems that didn’t have existing solutions, or were new ideas entirely. Most of this work was done in academic contexts to develop things like basic operating systems and methods of input and output. These were complex projects, but as research, they didn’t need to scale; they just needed to work. It wasn’t until the late 1960s that the first truly large software projects were attempted commercially, and software had to actually perform.

The IBM 360 operating system was one of the first big projects of this kind. Suddenly, there were multiple people working on multiple components, all of which interacted with one another. Each part of the program needed to coordinate with the others, which usually meant that each part’s authors needed to coordinate, and the term software engineering was born. Programmers and academics from around the world, especially those who were working on big projects, created conferences so they could meet and discuss their challenges. In the first software engineering conference in 1968, attendees speculated about why projects were shipping late, why they were over budget, and what they could do about it. There was now a phrase, “software engineering,” to organize around, but there were many questions and few answers.

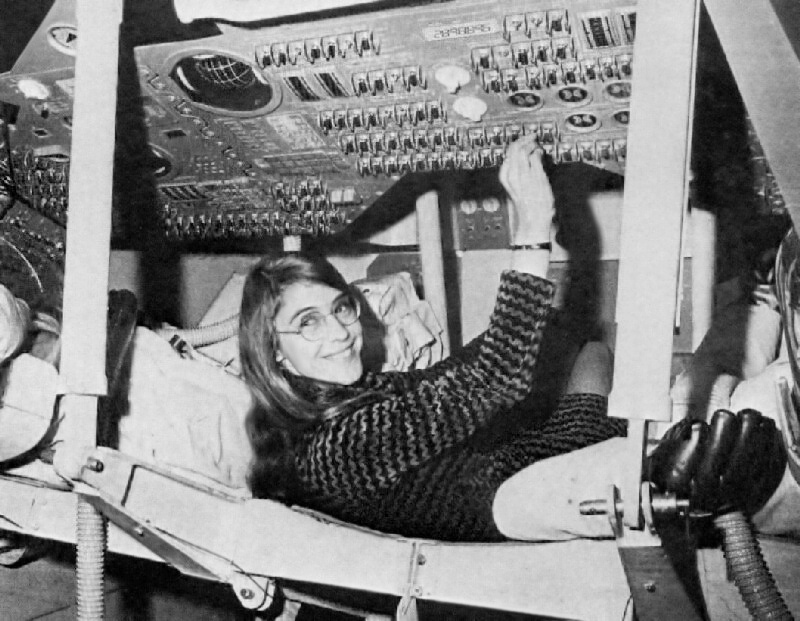

At the time, one of the key people pursuing these answers was Margaret Hamilton, a computer scientist who was Director of the Software Engineering Division of the MIT Instrumentation Laboratory. One of the lab’s key projects in the late 1960s was developing the on-board flight software for the Apollo space program. Hamilton led the development of error detection and recovery, the information displays, the lunar lander, and many other critical components, while managing a team of other computer scientists who helped. It was as part of this project that many of the central problems in software engineering began to emerge, including verification of code, coordination of teams, and managing versions. This led to one of her passions, which was giving software legitimacy as a form of engineering; at the time, it was viewed as routine, uninteresting, and simple work. Her leadership helped establish software engineering as a core part of systems engineering.

The first conference, the IBM 360 project, and Hamilton’s experiences on the Apollo mission identified many problems that had no clear solutions:

- When you’re solving a problem that doesn’t yet have a solution, what is a good process for building a solution?

- When software does so many different things, how can you know software “works”?

- How can you make progress when no one on the team understands every part of the program?

- When people leave a project, how do you ensure their replacement has all of the knowledge they had?

- When no one understands every part of the program, how do you diagnose defects?

- When people are working in parallel, how do you prevent them from clobbering each other’s work?

- If software engineering is about more than coding, what skills does a good coder need to have?

- What kinds of tools and languages can accelerate a programmer’s work and help them prevent mistakes?

- How can projects not lose sight of the immense complexity of human needs, values, ethics, and policy that interact with engineering decisions?

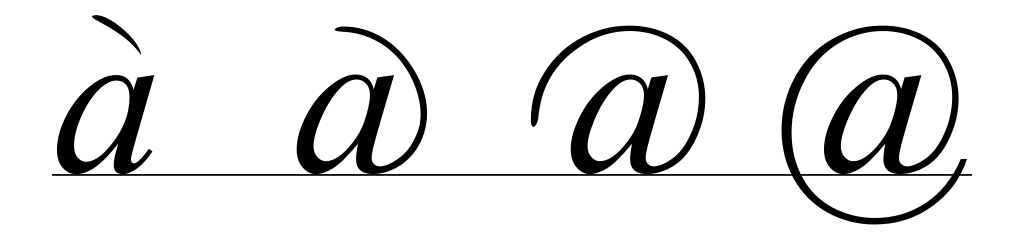

As it became clear that software was not an incremental change in technology, but a profoundly disruptive one, countless communities began to explore these questions in research and practice. Black American entrepreneurs began to explore how to use software to connect and build community well before the internet was ubiquitous, creating some of the first web-scale online communities and forging careers at IBM, ultimately to be suppressed by racism in the workplace and society 9 . White entrepreneurs in Silicon Valley began to explore ways to bring computing to the masses, bolstered by the immense capital investments of venture capitalists, who saw opportunities for profit through disruption 7 . And academia, which had helped demonstrate the feasibility of computing and established its foundations, began to invent the foundational tools of software engineering, including version control systems, software testing, and a wide array of high-level programming languages such as Fortran 10 , LISP 8 , C++ 12 and Smalltalk 6 , all of which inspired the design of today’s most popular languages, including Java, Python, and JavaScript. And throughout, despite the central role of women in programming the first digital computers, managing the first major software engineering projects, and imagining how software could change the world, women were systematically excluded from all of these efforts, their histories forgotten, erased, and overshadowed by pervasive sexism in commerce and government 1 .

While technical progress has been swift, progress on the human aspects of software engineering has been more difficult to understand and improve. One of the seminal books on these issues was Fred P. Brooks, Jr.’s The Mythical Man Month 3 . In it, he presented hundreds of claims about software engineering. For example, he hypothesized that adding more programmers to a project would actually make productivity worse at some level, not better, because knowledge sharing would be an immense but necessary burden. He also claimed that the first implementation of a solution is usually terrible and should be treated like a prototype: used for learning and then discarded. These and other claims have been the foundation of decades of research, all in search of some deeper answer to the questions above. And only recently have scholars begun to reveal how software and software engineering tend to encode, amplify, and reinforce existing structures and norms of discrimination by encoding them into data, algorithms, and software architectures 2 . These histories show that, just like any other human activity, there are strong cultural forces that shape how people engineer software together, what they engineer, and what effect that has on society.
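Brooks’s claim about the burden of knowledge sharing can be made concrete with a little arithmetic. The sketch below assumes the worst case, in which every pair of programmers on a team has to coordinate directly; under that assumption, the number of pairs grows as n(n-1)/2. The function name `communication_paths` is purely illustrative, and real teams reduce this burden with structure and documentation.

```python
def communication_paths(team_size: int) -> int:
    """Count the distinct pairs of people who may need to coordinate.

    Assumes the worst case: every person must talk to every other person.
    """
    return team_size * (team_size - 1) // 2


# Growing a team adds people linearly but adds pairs quadratically.
for n in (2, 5, 10, 50):
    print(f"{n} programmers -> {communication_paths(n)} possible pairs")
# 2 programmers -> 1 possible pairs
# 5 programmers -> 10 possible pairs
# 10 programmers -> 45 possible pairs
# 50 programmers -> 1225 possible pairs
```

Going from 10 to 50 programmers multiplies the head count by five but the number of possible conversations by roughly twenty-seven, which is one way to see why adding people can slow a project down.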

If we step even further beyond software engineering as an activity and think more broadly about the role that software is playing in society today, there are also other, newer questions that we’ve only begun to answer. If every part of society now runs on code, what responsibility do software engineers have to ensure that code is right? What responsibility do software engineers have to avoid algorithmic bias? If our cars are to soon drive us around, who’s responsible for the first death: the car, the driver, the software engineers who built it, or the company that sold it? These ethical questions are in some ways the future of software engineering, likely to shape its regulatory context, its processes, and its responsibilities.

There are also economic roles that software plays in society that it didn’t before. Around the world, software is a major source of job growth, but also a major source of automation, eliminating jobs that people used to do. These larger forces that software exerts on the world demand that software engineers have a stronger understanding of the roles that software plays in society, as the decisions that engineers make can have profound unintended consequences.

We’re nowhere close to having deep answers to these questions, neither the old ones nor the new ones. We know a lot about programming languages and a lot about testing. These are areas amenable to automation and so computer science has rapidly improved and accelerated these parts of software engineering. The rest of it, as we shall see, has not made much progress. In this book, we’ll discuss what we know and the much larger space of what we don’t.

References

1. Janet Abbate (2012). Recoding gender: women's changing participation in computing. MIT Press.

2. Ruha Benjamin (2019). Race after technology: Abolitionist tools for the New Jim Code. Polity Books.

3. Fred P. Brooks (1995). The mythical man month. Pearson Education.

4. James Gleick (2011). The Information: A History, A Theory, A Flood. Pantheon Books.

5. Jonathan Grudin (2017). From Tool to Partner: The Evolution of Human-Computer Interaction. Source.

6. Alan C. Kay (1996). The early history of Smalltalk. History of Programming Languages II.

7. M. Kenney (2000). Understanding Silicon Valley: The anatomy of an entrepreneurial region. Stanford University Press.

8. John McCarthy (1978). History of LISP. History of Programming Languages I.

9. Charlton D. McIlwain (2019). Black software: the internet and racial justice, from the AfroNet to Black Lives Matter. Oxford University Press.

10. Michael Metcalf (2002). History of Fortran. ACM SIGPLAN Fortran Forum.

11. Margot Lee Shetterly (2017). Hidden figures: the American dream and the untold story of the Black women mathematicians who helped win the space race. HarperCollins Nordic.

12. Bjarne Stroustrup (1996). A history of C++: 1979-1991. History of Programming Languages II.

Organizations

The photo above is a candid shot of some of the software engineers of AnswerDash, a company I co-founded in 2012 that was later acquired in 2020. There are a few things to notice in the photograph. First, you see one of the employees explaining something, while others are diligently working off to the side. It’s not a huge team; just a few engineers, plus several employees in other parts of the organization in another room. This, as simple as it looks, is pretty much what all software engineering work looks like. Some organizations have one of these teams; others have thousands.

What you can’t see is just how much complexity underlies this work. You can’t see the organizational structures that exist to manage this complexity. Inside this room and the rooms around it were processes, standards, reviews, workflows, managers, values, culture, decision making, analytics, marketing, sales. And at the center of it were people executing all of these things as well as they could to achieve the organization’s goal.

Organizations are a much bigger topic than I could possibly address here. To deeply understand them, you’d need to learn about organizational studies, organizational behavior, information systems, and business in general.

The subset of this knowledge that’s critical to understand about software engineering is limited to a few important concepts. The first and most important concept is that even in software organizations, the point of the company is rarely to make software; it’s to provide value 8 . Software is sometimes the central means to providing that value, but more often than not, it’s the information flowing through that software that’s the truly valuable piece. Requirements, which we will discuss in a later chapter, help engineers organize how software will provide value.

The individuals in a software organization take on different roles to achieve that value. These roles are sometimes spread across different people and sometimes bundled up into one person, depending on how the organization is structured, but the roles are always there. Let’s go through each one in detail so you understand how software engineers relate to each role.

- Marketers look for opportunities to provide value. In for-profit businesses, this might mean conducting market research, estimating the size of opportunities, identifying audiences, and getting those audiences’ attention. Non-profits need to do this work as well in order to get their solutions to people, but may be driven more by solving problems than making money.

- Product managers decide what value the product will provide, monitoring the marketplace and prioritizing work.

- Designers decide how software will provide value. This isn’t about code or really even about software; it’s about envisioning solutions to problems that people have.

- Software engineers write code with other engineers to implement requirements envisioned by designers. If they fail to meet requirements, the design won’t be implemented correctly, which will prevent the software from providing value.

- Sales takes the product that’s been built and tries to sell it to the audiences that marketers have identified. They also try to refine the organization’s understanding of what the customer wants and needs, providing feedback to marketing, product, and design, which engineers then address.

- Support helps the people using the product to use it successfully and, like sales, provides feedback to product, design, and engineering about the product’s value (or lack thereof) and its defects.

As I noted above, sometimes the roles above get merged into individuals. When I was CTO at AnswerDash, I had software engineering roles, design roles, product roles, sales roles, and support roles. This was partly because it was a small company when I was there. As organizations grow, these roles tend to be divided into smaller pieces. This division often means that different parts of the organization don’t share knowledge, even when it would be advantageous 3 .

Note that in the division of responsibilities above, software engineers really aren’t the designers by default. They don’t decide what product is made or what problems that product solves. They may have opinions—and a great deal of power to enforce their opinions, as the people building the product—but it’s not ultimately their decision.

There are other roles you might be thinking of that I haven’t mentioned:

- Engineering managers exist in all roles when teams get to a certain size, helping to move information between higher and lower parts of an organization. Even engineering managers are primarily focused on organizing and prioritizing work, and not doing engineering 5 . Much of their time is spent ensuring every engineer has what they need to be productive, while also managing coordination and interpersonal conflict between engineers.

- Data scientists, although a new role, typically facilitate decision making on the part of any of the roles above 1 . They might help engineers find bugs, help marketers analyze data, track sales targets, mine support data, or inform design decisions. They’re experts at using data to accelerate and improve the decisions made by the roles above.

- Researchers, also called user researchers, help people in a software organization make decisions too, but usually product decisions, helping marketers, sales, and product managers decide what products to make and who would want them. In many cases, they can complement the work of data scientists, providing qualitative work to triangulate quantitative data.

- Ethics and policy specialists, who might come with backgrounds in law, policy, or social science, might shape terms of service, software licenses, algorithmic bias audits, privacy policy compliance, and processes for engaging with stakeholders affected by the software being engineered. Any company that works with data, especially those that work with data at large scales or in contexts with great potential for harm, hate, and abuse, needs significant expertise to anticipate and prevent harm from engineering and design decisions.

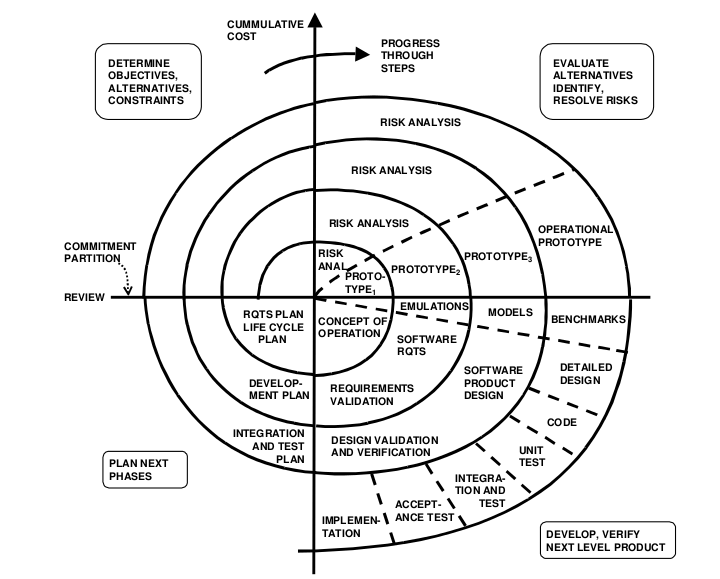

Every decision made in a software team is made under uncertainty, and so another important concept in organizations is risk 2 . It’s rarely possible to predict the future, and so organizations must take risks. Much of an organization’s function is to mitigate the consequences of risks. Data scientists and researchers mitigate risk by increasing confidence in an organization’s understanding of the market and its consumers. Engineers manage risk by trying to avoid defects. Of course, as many popular outlets on software engineering have begun to discover, when software fails, it usually “did exactly what it was told to do.” The reason it failed is that it was told to do the wrong thing 10 .

Open source communities are organizations too. The core activities of design, engineering, and support still exist in these, but how much a community is engaged in marketing and sales depends entirely on the purpose of the community. Big, established open source projects like Mozilla have revenue, buildings, and a CEO, and while they don’t sell anything, they do market. Others like Linux 6 rely heavily on contributions not only from volunteers 11 , but also from paid employees of companies that depend on Linux, like IBM, Google, and others. In these settings, there are still all of the challenges that come with software engineering, but fewer of the constraints that come from a for-profit or non-profit motive. In fact, recent work empirically uncovered nine reasons why modern open source projects fail: 1) lost to competition, 2) made obsolete by technology advances, 3) lack of time to volunteer, 4) lack of interest by contributors, 5) outdated technologies, 6) poor maintainability, 7) interpersonal conflicts amongst developers, 8) legal challenges, and 9) acquisition 4 . Another study showed that funding open source projects often requires substantial donations from large corporations; most projects don’t ask for donations, and those that do receive very little unless they’re well-established, and most of those funds go to paying for basic expenses such as engineering salaries 9 . These challenges aren’t too different from those of traditional software organizations, aside from the added challenges of sustaining a volunteer workforce.

All of the above has some important implications for what it means to be a software engineer:

- Engineers are not the only important role in a software organization. In fact, they may be less important to an organization’s success than other roles because the decisions they make (how to implement requirements) have a smaller impact on the organization’s goals than other decisions (what to make, who to sell it to, etc.).

- Engineers have to work with a lot of people in different roles. Learning what those roles are and what shapes their success is important to being a good collaborator 7 .

- While engineers might have many great product ideas, if they really want to shape what they’re building, they should be in a product role, not an engineering role.

All that said, products wouldn’t exist without engineers. They ensure that every detail about a product reflects the best knowledge of the people in their organization, and so attention to detail is paramount. In future chapters, we’ll discuss all of the ways that software engineers manage this detail, mitigating the burden on their memories with tools and processes.

References

1. Andy Begel, Thomas Zimmermann (2014). Analyze this! 145 questions for data scientists in software engineering. ACM/IEEE International Conference on Software Engineering.

2. Barry W. Boehm (1991). Software risk management: principles and practices. IEEE Software.

3. Parmit K. Chilana, Amy J. Ko, Jacob O. Wobbrock, Tovi Grossman, and George Fitzmaurice (2011). Post-deployment usability: a survey of current practices. ACM SIGCHI Conference on Human Factors in Computing (CHI).

4. Jailton Coelho and Marco Tulio Valente (2017). Why modern open source projects fail. ACM Joint European Software Engineering Conference and Symposium on the Foundations of Software Engineering (ESEC/FSE).

5. Eirini Kalliamvakou, Christian Bird, Thomas Zimmermann, Andrew Begel, Robert DeLine, Daniel M. German (2017). What makes a great manager of software engineers?. IEEE Transactions on Software Engineering.

6. Gwendolyn K. Lee, Robert E. Cole (2003). From a firm-based to a community-based model of knowledge creation: The case of the Linux kernel development. Organization Science.

7. Paul Luo Li, Amy J. Ko, and Andrew Begel (2017). Cross-disciplinary perspectives on collaborations with software engineers. International Workshop on Cooperative and Human Aspects of Software Engineering.

8. Alexander Osterwalder, Yves Pigneur, Gregory Bernarda, Alan Smith (2015). Value proposition design: how to create products and services customers want. John Wiley & Sons.

9. Cassandra Overney, Jens Meinicke, Christian Kästner, Bogdan Vasilescu (2020). How to not get rich: an empirical study of donations in open source. ACM/IEEE International Conference on Software Engineering.

10. James Somers (2017). The coming software apocalypse. The Atlantic Monthly.

11. Yunwen Ye and Kouichi Kishida (2003). Toward an understanding of the motivation of open source software developers. ACM/IEEE International Conference on Software Engineering.

Communication

Because software engineering often distributes work across multiple people, a fundamental challenge is ensuring that everyone on a team has the same understanding of what is being built and why. In the seminal book The Mythical Man Month, Fred Brooks argued that good software needs to have conceptual integrity, both in how it is designed and in how it is implemented 5 . This is the idea that the vision of what is being built must stay intact, even as the building of it gets distributed to multiple people. When multiple people are responsible for implementing a single coherent idea, how can they ensure they all build the same idea?

The solution is effective communication. As some events in industry have shown, communication requires empathy and teamwork. When communication is poor, teams become disconnected and produce software defects 4 . Therefore, achieving effective communication practices is paramount.

It turns out, however, that communication plays such a powerful role in software projects that it even shapes how projects unfold. Perhaps the most notable theory about the effect of communication is Conway’s Law 6 . This theory argues that any designed system, software included, will reflect the communication structures involved in producing it. For example, think back to any course project where you divided the work into chunks and tried to combine them together into a final report at the end. The report and its structure probably mirrored the fact that several distinct people worked on each section of the report, rather than sounding like a single coherent voice. The same thing happens in software: if the team writing error messages for a website isn’t talking to the team presenting them, you’re probably going to get a lot of error messages that aren’t so clear, may not fit on screen, and may not be phrased using the terminology of the rest of the site. On the other hand, if those two teams meet regularly to design the error messages together, communicating their shared knowledge, they might produce a seamless, coherent experience. Not only does software follow this law when a project is created, it also follows this law as projects evolve over time 18 .

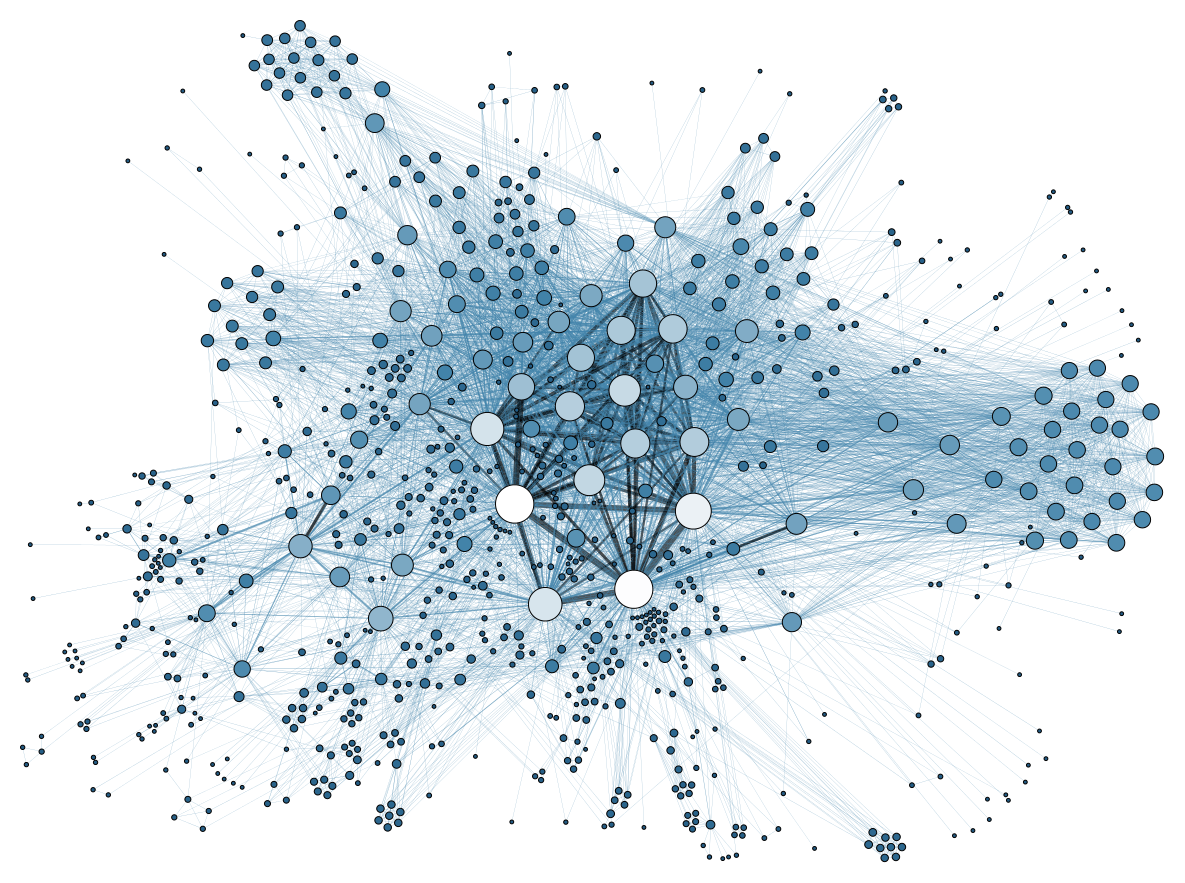

Because communication is so central, software engineers are constantly seeking information to further their work, going to their coworkers’ desks, emailing them, chatting via messaging platforms, and even using social media 8 . Some of the information that developers seek is easier to find than the rest. For example, in the study I just cited, it was pretty trivial to find information about who wrote a line of code or whether a build was done, but when the information they needed resided in someone else’s head (e.g., why a particular line of code was written), it was slow or often impossible to retrieve it. Sometimes it’s not even possible to find out who has the information. Researchers have investigated tools for trying to quantify expertise by automatically analyzing the code that developers have written, building platforms to help developers search for other developers who might know what they need to know 2,12 .
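To give a feel for what quantifying expertise from code might look like, here is a minimal sketch. It is not how the cited research tools work; it simply assumes that the number of changes a developer has made to a file is a crude proxy for how much they know about it. The change history, the developer names, and the function `rank_experts` are all hypothetical.

```python
from collections import Counter

# Hypothetical change history: (author, file changed) pairs, such as might
# be extracted from a version control log. All names are made up.
CHANGES = [
    ("ana", "auth/login.py"),
    ("ana", "auth/login.py"),
    ("ben", "auth/login.py"),
    ("ben", "billing/invoice.py"),
    ("chen", "billing/invoice.py"),
    ("chen", "billing/invoice.py"),
    ("chen", "billing/invoice.py"),
]


def rank_experts(changes, path):
    """Return (author, change count) pairs for `path`, most changes first."""
    counts = Counter(author for author, changed in changes if changed == path)
    return counts.most_common()


print(rank_experts(CHANGES, "billing/invoice.py"))
# [('chen', 3), ('ben', 1)]
```

A count like this can suggest who to ask first, but it says nothing about why the code was written the way it was, which is exactly the kind of knowledge that tends to live only in someone’s head.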

Communication is not always effective. In fact, there are many kinds of communication that are highly problematic in software engineering teams. For example, Perlow 13 conducted an ethnography of one team and found a highly dysfunctional use of interruptions in which the most expert members of a team were constantly interrupted to “fight fires” (immediately address critical problems) in other parts of the organization, and then the organization rewarded them for their heroics. This not only made the most expert engineers less productive, but it also discouraged the rest of the organization from finding effective ways of preventing the disasters from occurring in the first place. Not all interruptions are bad, and they can increase productivity, but they do increase stress 10 .

Communication isn’t just about transmitting information; it’s also about relationships and identity. For example, the dominant culture of many software engineering work environments, and even the perceived culture, is one that can deter many people from even pursuing careers in computer science. Modern work environments are still dominated by men, who speak loudly, out of turn, and disrespectfully, sometimes even engaging in sexual harassment 16 . Computer science as a discipline, and the software industry that it shapes, has only just begun to consider the urgent need for cultural competence (the ability for individuals and organizations to work effectively when their employees’ thoughts, communications, actions, customs, beliefs, values, religions, and social groups vary) 17 . Similarly, software developers often have to work with people in other domains such as artists, content developers, data scientists, design researchers, designers, electrical engineers, mechanical engineers, product planners, program managers, and service engineers. One study found that developers’ cross-disciplinary collaborations with people in these other domains required open-mindedness about the input of others, proactively informing everyone about code-related constraints, and ultimately seeing the broader picture of how pieces from different disciplines fit together; when developers didn’t do these things, collaborations failed, and therefore projects failed 9 . These are not the conditions for trusting, effective communication.

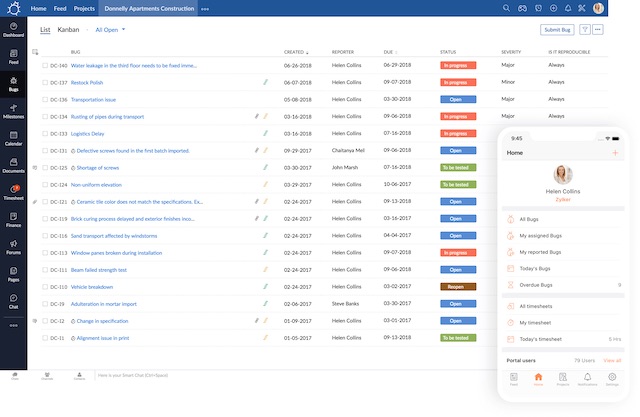

When communication is effective, it still takes time. One of the key strategies for reducing the amount of communication necessary is knowledge sharing tools, which broadly refers to any information system that stores facts that developers would normally have to retrieve from a person. By storing them in a database and making them easy to search, teams can avoid interruptions. The most common knowledge sharing tools in software teams are issue trackers, which are often at the center of communication not only between developers, but also with every other part of a software organization 3 . Community portals, such as GitHub pages or Slack teams, can also be effective ways of sharing documents and archiving decisions 15 . Perhaps the most popular knowledge sharing tool in software engineering today is Stack Overflow 1 , which archives facts about programming language and API usage. Such sites, while they can be great resources, have the same problems as many media, such as gender biases that prevent contributions from women from being rewarded as highly as contributions from men 11 .

Because all of this knowledge is so critical to progress, when developers leave an organization and haven’t archived their knowledge somewhere, it can be quite disruptive to progress. Organizations often have single points of failure, in which a single developer may be critical to a team’s ability to maintain and enhance a software product 14 . When newcomers join a team and lack the right knowledge, they introduce defects 7 . Some companies try to mitigate this by rotating developers between projects, “cross-training” them to ensure that the necessary knowledge to maintain a project is distributed across multiple engineers.
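One rough way to spot potential single points of failure is to look for parts of a codebase that only one person has ever changed. The sketch below assumes that a change history is available as (author, file) pairs and that never having changed a file means not knowing it well, which is a strong simplification. The sample data and the function `single_owner_files` are hypothetical.

```python
from collections import defaultdict

# Hypothetical change history: (author, file changed) pairs. Names are made up.
CHANGES = [
    ("ana", "search/index.py"),
    ("ben", "search/index.py"),
    ("chen", "payments/refunds.py"),
    ("chen", "payments/refunds.py"),
    ("ana", "docs/setup.md"),
]


def single_owner_files(changes):
    """Return {file: author} for files that only one person has ever changed."""
    authors_by_file = defaultdict(set)
    for author, path in changes:
        authors_by_file[path].add(author)
    return {
        path: next(iter(authors))
        for path, authors in authors_by_file.items()
        if len(authors) == 1
    }


print(single_owner_files(CHANGES))
# {'payments/refunds.py': 'chen', 'docs/setup.md': 'ana'}
```

Files that show up in a report like this are natural candidates for cross-training or for writing down what their sole author knows.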

What does all of this mean for you as an individual developer? To put it simply, don’t underestimate the importance of talking. Know who you need to talk to, talk to them frequently, and to the extent that you can, write down what you know, both to lessen the demand for talking and to mitigate the risk of you not being available, and also to make your knowledge more precise and accessible in the future. It often takes decades for engineers to excel at communication. The very fact that you know why communication is important gives you a critical head start.

References

-

Jeff Atwood (2016). The state of programming with Stack Overflow co-founder Jeff Atwood. Software Engineering Daily Podcast.

-

Andrew Begel, Yit Phang Khoo, and Thomas Zimmermann (2010). Codebook: discovering and exploiting relationships in software repositories. ACM/IEEE International Conference on Software Engineering.

-

Dane Bertram, Amy Voida, Saul Greenberg, and Robert Walker (2010). Communication, collaboration, and bugs: the social nature of issue tracking in small, collocated teams. ACM Conference on Computer Supported Cooperative Work (CSCW).

-

Nicolas Bettenburg, Ahmed E. Hassan (2013). Studying the impact of social interactions on software quality. Empirical Software Engineering.

-

Fred P. Brooks (1995). The mythical man month. Pearson Education.

-

Melvin E. Conway (1968). How do committees invent. Datamation.

-

Matthieu Foucault, Marc Palyart, Xavier Blanc, Gail C. Murphy, and Jean-Rémy Falleri (2015). Impact of developer turnover on quality in open-source software. ACM Joint European Software Engineering Conference and Symposium on the Foundations of Software Engineering (ESEC/FSE).

-

Amy J. Ko, Rob DeLine, and Gina Venolia (2007). Information needs in collocated software development teams. ACM/IEEE International Conference on Software Engineering.

-

Paul Luo Li, Amy J. Ko, and Andrew Begel (2017). Cross-disciplinary perspectives on collaborations with software engineers. International Workshop on Cooperative and Human Aspects of Software Engineering.

-

Gloria Mark, Daniela Gudith, and Ulrich Klocke (2008). The cost of interrupted work: more speed and stress. ACM SIGCHI Conference on Human Factors in Computing (CHI).

-

Anna May, Johannes Wachs, Anikó Hannák (2019). Gender differences in participation and reward on Stack Overflow. Empirical Software Engineering.

-

Audris Mockus and James D. Herbsleb (2002). Expertise browser: a quantitative approach to identifying expertise. ACM/IEEE International Conference on Software Engineering.

-

Leslie A. Perlow (1999). The time famine: Toward a sociology of work time. Administrative science quarterly.

-

Peter C. Rigby, Yue Cai Zhu, Samuel M. Donadelli, and Audris Mockus (2016). Quantifying and mitigating turnover-induced knowledge loss: case studies of chrome and a project at Avaya. ACM/IEEE International Conference on Software Engineering.

-

Christoph Treude and Margaret-Anne Storey (2011). Effective communication of software development knowledge through community portals. ACM SIGSOFT Foundations of Software Engineering (FSE).

-

Jennifer Wang (2016). Female pursuit of Computer Science with Jennifer Wang. Software Engineering Daily Podcast.

-

Alicia Nicki Washington (2020). When twice as good isn't enough: the case for cultural competence in computing. ACM Technical Symposium on Computer Science Education.

-

Minghui Zhou and Audris Mockus (2011). Does the initial environment impact the future of developers?. ACM/IEEE International Conference on Software Engineering.

Productivity

When we think of productivity, we usually have a vague concept of a rate of work per unit time. Where it gets tricky is in defining “work”. On an individual level, work can be easier to define, because developers often have specific concrete tasks that they’re assigned. But until a developer is done with a task, it’s not really easy to define progress (well, it’s not that easy to define “done” either, but that’s a topic for a later chapter). When you start considering work at the scale of a team or an organization, productivity gets even harder to define, since an individual’s productivity might be increased by ignoring every critical request from a teammate, harming the team’s overall productivity.

Despite the challenge in defining productivity, there are numerous factors that affect productivity. For example, at the individual level, having the right tools can result in an order of magnitude difference in speed at accomplishing a task. One study I ran found that developers using the Eclipse IDE spent a third of their time just physically navigating between source files 8 . With the right navigation aids, developers could be writing code and fixing bugs 30% faster. In fact, some tools like Mylyn automatically bring relevant code to the developer rather than making them navigate to it, greatly increasing the speed with which developers can accomplish a task 6 . Long gone are the days when developers should be using bare command lines and text editors to write code: IDEs can and do greatly increase productivity when used and configured with speed in mind.

Of course, individual productivity is about more than just tools. Studies of workplace productivity show that developers have highly fragmented days, interrupted by meetings, emails, coding, and non-work distractions 13 . These interruptions are often viewed negatively from an individual perspective 14 , but may be highly valuable from a team and organizational perspective. And then, productivity is not just about skills to manage time, but also many other skills that shape developer expertise, including skills in designing architectures, debugging, testing, programming languages, and more 1 . Hiring is therefore about far more than just how quickly and effectively someone can code 2 .

That said, productivity is not just about individual developers. Because communication is a key part of team productivity, an individual’s productivity is determined as much by their ability to collaborate and communicate with other developers as by their individual skills. In a study spanning dozens of interviews with senior software engineers, Li et al. found that the majority of critical attributes for software engineering skill (productivity included) concerned their interpersonal skills, their communication skills, and their ability to be resourceful within their organization 10 . Similarly, LaToza et al. found that the primary bottleneck in productivity was communication with teammates, primarily because waiting for replies was slower than just looking something up 9 . Of course, looking something up has its own problems. While Stack Overflow is an incredible resource for missing documentation 11 , it also is full of all kinds of misleading and incorrect information contributed by developers without sufficient expertise to answer questions 3 . Finally, because communication is such a critical part of retrieving information, adding more developers to a team has surprising effects. One study found that adding people to a team slowly enough to allow them to onboard effectively could reduce defects, but adding them too fast led to increases in defects 12 .

Another dimension of productivity is learning. Great engineers are resourceful, quick learners 10 . New engineers must be even more resourceful, even though their instincts are often to hide their lack of expertise from exactly the people they need help from 4 . Experienced developers know that learning is important and now rely heavily on social media such as Twitter to follow industry changes, build learning relationships, and discover new concepts and platforms to learn 16 . And, of course, developers now rely heavily on web search to fill in inevitable gaps in their knowledge about APIs, error messages, and myriad other details about languages and platforms 18 .

Unfortunately, learning is no easy task. One of my earliest studies as a researcher investigated the barriers to learning new programming languages and systems, finding six distinct types of content that are challenging 7 . To use a programming platform successfully, people need to overcome design barriers, which are the abstract computational problems that must be solved, independent of the languages and APIs. People need to overcome selection barriers, which involve finding the right abstractions or APIs to achieve the design they have identified. People need to overcome use and coordination barriers, which involve operating and coordinating different parts of a language or API together to achieve novel functionality. People need to overcome comprehension barriers, which involve knowing what can go wrong when using part of a language or API. And finally, people need to overcome information barriers, which are posed by the limited ability of tools to inspect a program’s behavior at runtime during debugging. Every single one of these barriers has its own challenges, and developers encounter them every time they are learning a new platform, regardless of how much expertise they have.

Aside from individual and team factors, productivity is also influenced by the particular features of a project’s code, how the project is managed, or the environment and organizational culture in which developers work 5,17 . In fact, these might actually be the biggest factors in determining developer productivity. This means that even a developer that is highly productive individually cannot rescue a team that is poorly structured working on poorly architected code. This might be why highly productive developers are so difficult to recruit to poorly managed teams.

A different way to think about productivity is to consider it from a “waste” perspective, in which waste is defined as any activity that does not contribute to a product’s value to users or customers. Sedano et al. investigated this view across two years and eight software development projects in a software development consultancy 15 , contributing a taxonomy of waste:

- Building the wrong feature or product. The cost of building a feature or product that does not address user or business needs.

- Mismanaging the backlog. The cost of duplicating work, expediting lower value user features, or delaying necessary bug fixes.

- Rework. The cost of altering delivered work that should have been done correctly but was not.

- Unnecessarily complex solutions. The cost of creating a more complicated solution than necessary, a missed opportunity to simplify features, user interface, or code.

- Extraneous cognitive load. The costs of unneeded expenditure of mental energy, such as poorly written code, context switching, confusing APIs, or technical debt.

- Psychological distress. The costs of burdening the team with unhelpful stress arising from low morale, pace, or interpersonal conflict.

- Waiting/multitasking. The cost of idle time, often hidden by multi-tasking, due to slow tests, missing information, or context switching.

- Knowledge loss. The cost of re-acquiring information that the team once knew.

- Ineffective communication. The cost of incomplete, incorrect, misleading, inefficient, or absent communication.

One could imagine using these concepts to refine processes and practices in a team, helping both developers and managers be more aware of sources of waste that harm productivity.

Of course, productivity is not only shaped by professional and organizational factors, but personal ones as well. Consider, for example, an engineer that has friends, wealth, health care, health, stable housing, sufficient pay, and safety: they likely have everything they need to bring their full attention to their work. In contrast, imagine an engineer that is isolated, has immense debt, has no health care, has a chronic disease like diabetes, is being displaced from an apartment by gentrification, has lower pay than their peers, or does not feel safe in public. Any one of these factors might limit an engineer’s ability to be productive at work; some people might experience multiple, or even all of these factors, especially if they are a person of color in the United States, who has faced a lifetime of racist inequities in school, health care, and housing. Because of the potential for such inequities to influence someone’s ability to work, managers and organizations need to make space for surfacing these inequities at work, so that teams can acknowledge them, plan around them, and ideally address them through targeted supports. Anything less tends to make engineers feel unsupported, which will only decrease their motivation to contribute to a team.

These widely varying conceptions of productivity reveal that programming in a software engineering context is about far more than just writing a lot of code. It’s about coordinating productively with a team, synchronizing your work with an organization’s goals, and most importantly, reflecting on ways to change work to achieve those goals more effectively.

References

-

Sebastian Baltes, Stephan Diehl (2018). Towards a theory of software development expertise. ACM Joint European Software Engineering Conference and Symposium on the Foundations of Software Engineering (ESEC/FSE).

-

Ammon Bartram (2016). Hiring engineers with Ammon Bartram. Software Engineering Daily Podcast.

-

Anton Barua, Stephen W. Thomas & Ahmed E. Hassan (2014). What are developers talking about? An analysis of topics and trends in Stack Overflow. Empirical Software Engineering.

-

Andy Begel, Beth Simon (2008). Novice software developers, all over again. ICER.

-

Tom DeMarco and Tim Lister (1985). Programmer performance and the effects of the workplace. ACM/IEEE International Conference on Software Engineering.

-

Mik Kersten and Gail C. Murphy (2006). Using task context to improve programmer productivity. ACM SIGSOFT Foundations of Software Engineering (FSE).

-

Amy J. Ko, Brad A. Myers, Htet Htet Aung (2004). Six learning barriers in end-user programming systems. IEEE Symposium on Visual Languages and Human-Centric Computing (VL/HCC).

-

Amy J. Ko, Htet Htet Aung, Brad A. Myers (2005). Eliciting design requirements for maintenance-oriented IDEs: a detailed study of corrective and perfective maintenance tasks. ACM/IEEE International Conference on Software Engineering.

-

Thomas D. LaToza, Gina Venolia, and Robert DeLine (2006). Maintaining mental models: a study of developer work habits. ACM/IEEE International Conference on Software Engineering.

-

Paul Luo Li, Amy J. Ko, and Jiamin Zhu (2015). What makes a great software engineer?. ACM/IEEE International Conference on Software Engineering.

-

Lena Mamykina, Bella Manoim, Manas Mittal, George Hripcsak, and Björn Hartmann (2011). Design lessons from the fastest Q&A site in the west. ACM SIGCHI Conference on Human Factors in Computing (CHI).

-

Andrew Meneely, Pete Rotella, and Laurie Williams (2011). Does adding manpower also affect quality? An empirical, longitudinal analysis. ACM Joint European Software Engineering Conference and Symposium on the Foundations of Software Engineering (ESEC/FSE).

-

André N. Meyer, Laura E. Barton, Gail C. Murphy, Thomas Zimmermann, Thomas Fritz (2017). The work life of developers: Activities, switches and perceived productivity. IEEE Transactions on Software Engineering.

-

Ben Northup (2016). Reflections of an old programmer. Software Engineering Daily Podcast.

-

Todd Sedano, Paul Ralph, Cécile Péraire (2017). Software development waste. ACM/IEEE International Conference on Software Engineering.

-

Leif Singer, Fernando Figueira Filho, and Margaret-Anne Storey (2014). Software engineering at the speed of light: how developers stay current using Twitter. ACM/IEEE International Conference on Software Engineering.

-

J. Vosburgh, B. Curtis, R. Wolverton, B. Albert, H. Malec, S. Hoben, and Y. Liu (1984). Productivity factors and programming environments. ACM/IEEE International Conference on Software Engineering.

-

Xin Xia, Lingfeng Bao, David Lo, Pavneet Singh Kochhar, Ahmed E. Hassan, Zhenchang Xing (2017). What do developers search for on the web?. Empirical Software Engineering.

Quality

There are numerous ways a software project can fail: projects can be over budget, they can ship late, they can fail to be useful, or they can simply not be useful enough. Evidence clearly shows that success is highly contextual and stakeholder-dependent: success might be financial, social, physical and even emotional, suggesting that software engineering success is a multifaceted variable that cannot be explained simply by user satisfaction, profitability or meeting requirements, budgets and schedules 6 .

One of the central reasons for this is that there are many distinct software qualities that software can have and depending on the stakeholders, each of these qualities might have more or less importance. For example, a safety critical system such as flight automation software should be reliable and defect-free, but it’s okay if it’s not particularly learnable—that’s what training is for. A video game, however, should probably be fun and learnable, but it’s fine if it ships with a few defects, as long as they don’t interfere with fun 4 .

There are a surprisingly large number of software qualities 1 . Many concern properties that are intrinsic to a software’s implementation:

- Correctness is the extent to which a program behaves according to its specification. If specifications are ambiguous, correctness is ambiguous. However, even if a specification is perfectly unambiguous, it might still fail to meet other qualities (e.g., a web site may be built as intended, but still be slow, unusable, and useless.)

- Reliability is the extent to which a program behaves the same way over time in the same operating environment. For example, if your online banking app works most of the time, but crashes sometimes, it’s not particularly reliable.

- Robustness is the extent to which a program can recover from errors or unexpected input. For example, a login form that crashes if an email is formatted improperly isn’t very robust. A login form that handles any text input is optimally robust. One can make a system more robust by increasing the breadth of errors and inputs it can handle in a reasonable way.

- Performance is the extent to which a program uses computing resources economically. Synonymous with “fast” and “zippy”. Performance is directly determined by how many instructions a program has to execute to accomplish its operations, but it is difficult to measure because operations, inputs, and the operating environment can vary widely.

- Portability is the extent to which an implementation can run on different platforms without being modified. For example, “universal” applications in the Apple ecosystem that can run on iPhones, iPads, and Mac OS without being modified or recompiled are highly portable.

- Interoperability is the extent to which a system can seamlessly interact with other systems, typically through the use of standards. For example, some software systems use entirely proprietary and secret data formats and communication protocols. These are less interoperable than systems that use industry-wide standards.

- Security is the extent to which only authorized individuals can access a software system’s data and computation.
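To make the robustness example from the list above concrete, here is a minimal sketch of a login form's email check that returns a result for any input rather than crashing. The function name `validate_login_email` and the deliberately simple pattern are assumptions made for illustration; this is not a complete email validator.

```python
import re

# Deliberately simple pattern (an assumption for illustration):
# something@something.something, with no spaces and a single "@".
_EMAIL_PATTERN = re.compile(r"[^@\s]+@[^@\s]+\.[^@\s]+")


def validate_login_email(raw_input):
    """Return (ok, message) for any input instead of raising an exception."""
    # Non-text input (for example None from a missing form field) is rejected, not crashed on.
    if not isinstance(raw_input, str):
        return False, "Please enter an email address."
    candidate = raw_input.strip()
    if not candidate:
        return False, "Please enter an email address."
    if _EMAIL_PATTERN.fullmatch(candidate) is None:
        return False, "That does not look like an email address."
    return True, "OK"
```

The design choice here is that malformed input is an expected case with its own message, so the breadth of inputs the form handles reasonably includes empty text, missing values, and badly formatted addresses.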

Whereas the above qualities are concerned with how software behaves technically according to specifications, some qualities concern properties of how developers interact with code:

- Verifiability is the effort required to verify that software does what it is intended to do. For example, it is hard to verify a safety critical system without either proving it correct or testing it in a safety-critical context (which isn’t safe). Take driverless cars, for example: for Google to test their software, they’ve had to set up thousands of paid drivers to monitor and report problems on the road. In contrast, verifying that a simple static HTML web page works correctly is as simple as opening it in a browser.

- Maintainability is the effort required to correct, adapt, or perfect software. This depends mostly on how comprehensible and modular an implementation is.

- Reusability is the effort required to use a program’s components for purposes other than those for which it was originally designed. APIs are reusable by definition, whereas black box embedded software (like the software built into a car’s traction systems) is not.

Other qualities are concerned with the use of the software in the world by people:

- Learnability is the ease with which a person can learn to operate software. Learnability is multi-dimensional and can be difficult to measure, including aspects of usability, expectations of prior knowledge, reliance on conventions, error proneness, and task alignment 2 .

- User efficiency is the speed with which a person can perform tasks with a program. For example, think about the speed with which you can navigate back to the table of contents of this book. Obviously, because most software supports many tasks, user efficiency isn’t a single property of software, but one that varies depending on the task.

- Accessibility is the extent to which people with varying cognitive and motor abilities can operate the software as intended. For example, software that can only be used with a mouse is less accessible than something that can be used with a mouse, keyboard, or speech recognition. Software can be designed for all abilities, and even automatically adapted for individual abilities 7 .

- Privacy is the extent to which a system prevents access to information that is intended for a particular audience or use. To achieve privacy, a system must be secure; for example, if anyone could log into your Facebook account, it would be insecure, and thus have poor privacy preservation. However, a secure system is not necessarily private: Facebook works hard on security, but shares immense amounts of private data with third parties, often without informed consent.

- Consistency is the extent to which related functionality in a system leverages the same skills, rather than requiring new skills to learn how to use. For example, in Mac OS, quitting any application requires the same action: command-Q or the Quit menu item in the application menu; this is highly consistent. Other platforms that are less consistent allow applications to have many different ways of quitting applications.

- Usability is an aggregate quality that encompasses all of the qualities above. It is used holistically to refer to all of those factors. Because it is not very precise, it is mostly useful in casual conversation about software, but not as useful in technical conversations about software quality.

- Bias is the extent to which software discriminates or excludes on the basis of some aspect of its user, either directly, or by amplifying or reinforcing discriminatory or exclusionary structures in society. For example, the data used to train a classifier might be racially biased, algorithms might use sexist assumptions about gender, web forms might systematically exclude non-Western names and language, and applications might be only accessible to people who can see or use a mouse. Inaccessibility is a form of bias.

- Usefulness is the extent to which software is of value to its various stakeholders. Usefulness is often the most important quality because it subsumes all of the other lower-level qualities software can have (e.g., part of what makes a messaging app useful is that it’s performant, user efficient, and reliable). That also makes it less useful as a concept, because it encompasses so many things. That said, usefulness is not always the most important quality. For example, if you can sell a product to a customer and get a one-time payment, it might not matter, at least to a for-profit venture, that the product has low usefulness.

Although the lists above are not complete, you might have already noticed some tradeoffs between different qualities. A secure system is necessarily going to be less learnable, because there will be more to learn to operate it. A robust system will likely be less maintainable because it will likely have more code to account for its diverse operating environments. Because one cannot achieve all software qualities, and achieving each quality takes significant time, it is necessary to prioritize qualities for each project.

These external notions of quality are not the only qualities that matter. For example, developers often view projects as successful if they offer intrinsically rewarding work 5 . That may sound selfish, but if developers aren’t enjoying their work, they’re probably not going to achieve any of the qualities very well. Moreover, there are many organizational factors that can inhibit developers’ ability to obtain these rewards. Project complexity, internal and external dependencies that are out of a developer’s control, process barriers, budget limitations, deadlines, poor HR planning, and pressure to ship can all interfere with project success 3 .

As I’ve noted before, the person most responsible for isolating developers from these organizational problems, and most responsible for prioritizing software qualities, is a product manager.

References

-

Barry W. Boehm (1976). Software engineering. IEEE Transactions on Computers.

-

Tovi Grossman, George Fitzmaurice, Ramtin Attar (2009). A survey of software learnability: metrics, methodologies and guidelines. ACM SIGCHI Conference on Human Factors in Computing (CHI).

-

Mathieu Lavallee and Pierre N. Robillard (2015). Why good developers write bad code: an observational case study of the impacts of organizational factors on software quality. ACM/IEEE International Conference on Software Engineering.

-

Emerson Murphy-Hill, Thomas Zimmermann, and Nachiappan Nagappan (2014). Cowboys, ankle sprains, and keepers of quality: how is video game development different from software development?. ACM/IEEE International Conference on Software Engineering.

-

J. Drew Procaccino, June M. Verner, Katherine M. Shelfer, David Gefen (2005). What do software practitioners really think about project success: an exploratory study. Journal of Systems and Software.

-

Paul Ralph and Paul Kelly (2014). The dimensions of software engineering success. ACM/IEEE International Conference on Software Engineering.

-

Jacob O. Wobbrock, Shaun K. Kane, Krzysztof Z. Gajos, Susumu Harada, and Jon Froehlich (2011). Ability-based design: Concept, principles and examples. ACM Transactions on Accessible Computing (TACCESS).

Requirements

Once you have a problem, a solution, and a design specification, it’s entirely reasonable to start thinking about code. What libraries should we use? What platform is best? Who will build what? After all, there’s no better way to test the feasibility of an idea than to build it, deploy it, and find out if it works. Right?

It depends. This mentality towards product design works fine if building and deploying something is cheap and getting feedback has no consequences. Simple consumer applications often benefit from this simplicity, especially early stage ones, because there’s little to lose. For example, if you are starting a company, and do not even know if there is a market opportunity yet, it may be worth quickly prototyping an idea, seeing if there’s interest, and then later thinking about how to carefully architect a product that meets that opportunity. This is how products such as Facebook started, with a poorly implemented prototype that revealed an opportunity, which was only later translated into a functional, reliable software service.

However, what if a beta isn’t cheap to build? What if your product only has one shot at adoption? What if you’re building something for a client and they want to define success? Worse yet, what if your product could kill people if it’s not built properly? Consider the U.S. HealthCare.gov launch, for example, which was lambasted for its countless defects and poor scalability at launch, only working for 1,100 simultaneous users, when 50,000 were expected and 250,000 actually arrived. To prevent disastrous launches like this, software teams have to be more careful about translating a design specification into a specific explicit set of goals that must be satisfied in order for the implementation to be complete. We call these goals requirements and we call this process requirements engineering 7 .

In principle, requirements are a relatively simple concept. They are simply statements of what must be true about a system to make the system acceptable. For example, suppose you were designing an interactive mobile game. You might want to write the requirement “The frame rate must never drop below 60 frames per second.” This could be important for any number of reasons: the game may rely on interactive speeds, a high frame rate may be key to creating a sense of realism, or your company may have a reputation for high performance, high fidelity graphics that anything less would erode. Whatever the reasons, expressing it as a requirement makes it explicit that any version of the software that doesn’t meet that requirement is unacceptable, and sets a clear goal for engineering to meet.
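One way to see why an explicit requirement sets a clear goal is to express the frame rate requirement as a check over measurements. The sketch below assumes frame durations are recorded in seconds by some profiling tool; the names `meets_frame_rate_requirement` and `slow_frames` are hypothetical.

```python
REQUIRED_FPS = 60
# A frame rate of at least 60 frames per second means no frame may take longer than 1/60 s.
MAX_FRAME_SECONDS = 1.0 / REQUIRED_FPS


def meets_frame_rate_requirement(frame_durations):
    """True only if every measured frame took no longer than 1/60 of a second."""
    return all(duration <= MAX_FRAME_SECONDS for duration in frame_durations)


def slow_frames(frame_durations):
    """Return the indices of the frames that violate the requirement."""
    return [i for i, d in enumerate(frame_durations) if d > MAX_FRAME_SECONDS]
```

Because the requirement says "never", the check uses every frame rather than an average: a single 20 millisecond frame makes a build unacceptable under this wording, even if the average frame rate is well above 60.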

The general idea of writing down requirements is actually a controversial one. Why not just discover what a system needs to do incrementally, through testing, user feedback, and other methods? Some of the original arguments for writing down requirements actually acknowledged that software is necessarily built incrementally, but that it is nevertheless useful to write down requirements from the outset 6 . This is because requirements help you plan everything: what you have to build, what you have to test, and how to know when you’re done. The theory is that by defining requirements explicitly, you plan, and by planning, you save time.

Do you really have to plan by writing down requirements? For example, why not do what designers do, expressing requirements in the form of prototypes and mockups? These implicitly state requirements, because they suggest what the software is supposed to do without saying it directly. But for some types of requirements, they actually imply nothing. For example, how responsive should a web page be? A prototype doesn’t really say, whereas a requirement of an average page load time of less than 1 second leaves no doubt. Requirements can therefore be thought of more like an architect’s blueprint: they provide explicit definitions and scaffolding of project success.

And yet, like design, requirements come from the world and the people in it and not from software 2 . Because they come from the world, requirements are rarely objective or unambiguous. For example, some requirements come from law, such as the European Union’s General Data Protection Regulation (GDPR), which specifies a set of data privacy requirements that all software systems used by EU citizens must meet. Other requirements might come from public pressure for change, as in Twitter’s decision to label particular tweets as having false information or hate speech. Therefore, the methods that people use to do requirements engineering are quite diverse. Requirements engineers may work with lawyers to interpret policy. They might work with regulators to negotiate requirements. They might also use design methods, such as user research methods and rapid prototyping to iteratively converge toward requirements 3 . Therefore, the big difference between design and requirements engineering is that requirements engineers take the process one step further than designers, enumerating in detail every property that the software must satisfy, and engaging with every source of requirements a system might need to meet, not just user needs.

There are some approaches to specifying requirements formally. These techniques allow requirements engineers to automatically identify conflicting requirements, so they don’t end up proposing a design that can’t possibly exist. Some even use systems to make requirements traceable, meaning the high level requirement can be linked directly to the code that meets that requirement 4 . All of this formality has tradeoffs: not only does it take more time to be so precise, but it can negatively affect creativity in concept generation as well 5 .
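As a toy illustration of how formality makes conflicts detectable by a program, the sketch below represents requirements as numeric bounds on named properties and reports pairs whose bounds cannot both hold. Real formal requirements techniques use much richer logics; the `BoundRequirement` class, the `find_conflicts` function, and the example requirements are all assumptions made for this sketch.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class BoundRequirement:
    """A requirement that a measurable property stays within a bound."""
    name: str
    prop: str                         # property being constrained
    minimum: Optional[float] = None   # None means no lower bound
    maximum: Optional[float] = None   # None means no upper bound


def find_conflicts(requirements):
    """Return pairs of requirement names whose bounds on the same property cannot both hold."""
    conflicts = []
    for i, first in enumerate(requirements):
        for second in requirements[i + 1:]:
            if first.prop != second.prop:
                continue
            # A conflict exists when one requirement's minimum exceeds the other's maximum.
            for low, high in ((first, second), (second, first)):
                if (low.minimum is not None and high.maximum is not None
                        and low.minimum > high.maximum):
                    conflicts.append((first.name, second.name))
                    break
    return conflicts


# Hypothetical requirements: R1 and R2 cannot both be satisfied.
requirements = [
    BoundRequirement("R1", "page_load_seconds", maximum=1.0),
    BoundRequirement("R2", "page_load_seconds", minimum=2.0),
    BoundRequirement("R3", "frame_rate_fps", minimum=60.0),
]
conflicts = find_conflicts(requirements)  # [("R1", "R2")]
```

The precision that makes this check possible is also the cost the paragraph above describes: every requirement has to be reduced to a property and a bound before a tool can reason about it.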

Expressing requirements in natural language can mitigate these effects, at the expense of precision. They just have to be complete, precise, non-conflicting, and verifiable. For example, consider a design for a simple to-do list application. Its requirements might be something like the following:

- Users must be able to add to-do list items with a single action.

- To-do list items must contain text and a binary completed state.

- Users must be able to edit the text of to-do list items.

- Users must be able to toggle the completed state of to-do list items.

- Users must be able to delete to-do list items.

- All changes made to the state of to-do list items must be saved automatically without user intervention.

Let’s review these requirements against the criteria for good requirements that I listed above:

- Is it complete ? I can think of a few more requirements: is the list ordered? How long does state persist? Are there user accounts? Where is data stored? What does it look like? What kinds of user actions must be supported? Is delete undoable? Even just on these completeness dimension, you can see how even a very simple application can become quite complex. When you’re generating requirements, your job is to make sure you haven’t forgotten important requirements.

- Is the list precise ? Not really. When you add a to do list item, is it added at the beginning? The end? Wherever a user request it be added? How long can the to do list item text be? Clearly the requirement above is imprecise. And imprecise requirements lead to imprecise goals, which means that engineers might not meet them. Is this to do list team okay with not meeting its goals?

- Are the requirements non-conflicting ? I think they are since they all seem to be satisfiable together. But some of the missing requirements might conflict. For example, suppose we clarified the imprecise requirement about where a to do list item is added. If the requirement was that it was added to the end, is there also a requirement that the window scroll to make the newly added to do item visible? If not, would the first requirement of making it possible for users to add an item with a single action be achievable? They could add it, but they wouldn’t know they had added it because of this usability problem, so is this requirement met? This example shows that reasoning through requirements is ultimately about interpreting words, finding source of ambiguity, and trying to eliminate them with more words.

- Finally, are they verifiable? Some more than others. For example, is there a way to guarantee that the state saves successfully all the time? That may be difficult to prove given the vast number of ways the operating environment might prevent saving, such as a failing hard drive or an interrupted internet connection. This requirement might need to be revised to allow for failures to save, which itself might have implications for other requirements in the list.

Now, the flaws above don’t make the requirements “wrong”. They just make them “less good.” The more complete, precise, non-conflicting, and verifiable your requirements are, the easier it is to anticipate risk, estimate work, and evaluate progress, since requirements essentially give you a to-do list for implementation and testing.
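To make the link between requirements and testing concrete, here is a minimal sketch of two of the requirements above written as checks. The `TodoList` class and the `check_...` functions are hypothetical names invented for this illustration, and the choice to add new items at the end is an assumption, since the requirements leave that open.

```python
class TodoList:
    """Hypothetical in-memory to-do list, used only to illustrate verifiable requirements."""

    def __init__(self):
        self._items = []  # each item is a dict with "text" and "done" keys

    def add(self, text):
        # Assumption: new items go at the end; the requirements do not say where.
        self._items.append({"text": text, "done": False})
        return len(self._items) - 1

    def toggle(self, index):
        self._items[index]["done"] = not self._items[index]["done"]

    def delete(self, index):
        del self._items[index]

    def items(self):
        # Return copies so callers cannot change internal state directly.
        return [dict(item) for item in self._items]


def check_toggle_requirement():
    """Users must be able to toggle the completed state of to-do list items."""
    todo = TodoList()
    index = todo.add("buy milk")
    todo.toggle(index)
    return todo.items()[index]["done"] is True


def check_delete_requirement():
    """Users must be able to delete to-do list items."""
    todo = TodoList()
    index = todo.add("buy milk")
    todo.delete(index)
    return todo.items() == []
```

Notice that the requirement about saving automatically has no check here. Writing one would force us to decide what "saved" means and what should happen when saving fails, which is exactly the verifiability problem raised above.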

Lastly, remember that requirements are translated from a design, and designs have many more qualities than just completeness, preciseness, feasibility, and verifiability. Designs must also be legal, ethical, and just. Consider, for example, the anti-Black redlining practices pervasive throughout the United States. Even through the 1980s, it was standard practice for banks to lend to lower-income white residents, but not Black residents, even middle-income or upper-income ones. Banks in the 1980s wrote software to automate many lending decisions; would a software requirement such as this have been legal, ethical, or just?

No loan application with an applicant self-identified as a person of color should be approved.

That requirement is both precise and verifiable. In the 1980s, it was legal. But was it ethical or just? Absolutely not. Therefore, requirements, no matter how formally extracted from a design specification, no matter how consistent with law, and no matter how aligned with an organization’s priorities, should be free of racist ideas. Requirements are just one of many ways that such ideas are manifested, and ultimately hidden in code 1 .

References

-

Ruha Benjamin (2019). Race after technology: Abolitionist tools for the New Jim Code. Polity Books.

-

Michael Jackson (2001). Problem frames. Addison-Wesley.

-

Axel van Lamsweerde (2008). Requirements engineering: from craft to discipline. ACM SIGSOFT Foundations of Software Engineering (FSE).

-

Patrick Mäder, Alexander Egyed (2015). Do developers benefit from requirements traceability when evolving and maintaining a software system? Empirical Software Engineering.

-

Rahul Mohanani, Paul Ralph, and Ben Shreeve (2014). Requirements fixation. ACM/IEEE International Conference on Software Engineering.

-

David L Parnas, Paul C. Clements (1986). A rational design process: How and why to fake it. IEEE Transactions on Software Engineering.

-

Ian Sommerville, Pete Sawyer (1997). Requirements engineering: a good practice guide. John Wiley & Sons, Inc.

Architecture

Once you have a sense of what your design must do (in the form of requirements or other less formal specifications), the next big problem is one of organization. How will you organize all of the different data, algorithms, and control implied by your requirements? With a small program of a few hundred lines, you can get away without much organization, but as programs scale, they quickly become impossible to manage alone, let alone with multiple developers. Much of this challenge occurs because requirements change, and every time they do, code has to change to accommodate them. The more code there is and the more entangled it is, the harder it is to change and the more likely you are to break things.

This is where architecture comes in. Architecture is a way of organizing code, just like building architecture is a way of organizing space. The idea of software architecture has at its foundation a principle of information hiding: the less a part of a program knows about other parts of a program, the easier it is to change. The most popular information hiding strategy is encapsulation: this is the idea of designing self-contained abstractions with well-defined interfaces that separate different concerns in a program. Programming languages offer encapsulation support through things like functions and classes, which encapsulate data and functionality together. Another programming language encapsulation method is scoping, which hides variables and other names from parts of a program outside a scope. All of these strategies attempt to encourage developers to maximize information hiding and separation of concerns. If you get your encapsulation right, you should be able to easily make changes to a program’s behavior without having to change everything about its implementation.
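Here is a small sketch of what information hiding buys you. The two counter classes and the `count_words` function are hypothetical, made up for this illustration. Both classes expose the same interface (`increment` and `value`) but store their state differently, and the caller cannot tell the difference.

```python
class ListCounter:
    """Counts by appending to a hidden list."""

    def __init__(self):
        self._ticks = []  # leading underscore: internal by Python convention

    def increment(self):
        self._ticks.append(1)

    def value(self):
        return len(self._ticks)


class IntCounter:
    """Same interface, different hidden representation."""

    def __init__(self):
        self._count = 0

    def increment(self):
        self._count += 1

    def value(self):
        return self._count


def count_words(counter, text):
    # This caller depends only on increment() and value(),
    # so either counter class can be swapped in without changing it.
    for _ in text.split():
        counter.increment()
    return counter.value()
```

Because `count_words` knows nothing about how a counter stores its state, replacing `ListCounter` with `IntCounter` requires no change to it. Note that Python only hides the underscored names by convention; other languages enforce this with access modifiers.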

When encapsulation strategies fail, one can end up with what some affectionately call a “ball of mud” architecture or “spaghetti code”. Ball of mud architectures have no apparent organization, which makes it difficult to comprehend how parts of their implementation interact. A more precise concept that can help explain this disorder is cross-cutting concerns, which are things like features and functionality that span multiple different components of a system, or even an entire system. There is some evidence that cross-cutting concerns can lead to difficulties in program comprehension and long-term design degradation 17 , all of which reduce productivity and increase the risk of defects. As long-lived systems get harder to change, they can take on technical debt, which is the degree to which an implementation is out of sync with a team’s understanding of what a product is intended to be. Many developers view such debt as emerging primarily from poor architectural decisions 6 . Over time, this debt can further result in organizational challenges 9 , making change even more difficult.

The preventative solution to these problems is to try to design architecture up front, mitigating the various risks that come from cross-cutting concerns (defects, low modifiability, etc.) 7 . A popular method in the 1990s was the Unified Modeling Language (UML), which was a series of notations for expressing the architectural design of a system before implementing it. Recent studies show that UML was neither widely used nor universally applied 13 . While these formal representations have generally not been adopted, informal, natural language architectural specifications are still widely used. For example, Google engineers write design specifications to sort through ambiguities, consider alternatives, and clarify the volume of work required. A study of developers’ perceptions of the value of documentation also reinforced that many forms of documentation, including code comments, style guides, requirements specifications, installation guides, and API references, are viewed as critical, and are only viewed as less valuable because teams do not adequately maintain them 2 .

More recently, developers have investigated ideas of architectural styles, which are patterns of interactions and information exchange between encapsulated components. Some common architectural styles include:

- Client/server, in which data is transacted in response to requests. This is the basis of the Internet and cloud computing 5 .

- Pipe and filter, in which data is passed from component to component, and transformed and filtered along the way. Command lines, compilers, and machine learned programs are examples of pipe and filter architectures.

- Model-view-controller (MVC), in which data is separated from views of the data and from manipulations of data. Nearly all user interface toolkits use MVC, including popular modern frameworks such as React.

- Peer to peer (P2P), in which components transact data through a distributed standard interface. Examples include Bitcoin, Spotify, and Gnutella.

- Event-driven, in which some components “broadcast” events and others “subscribe” to notifications of these events. Examples include most model-view-controller-based user interface frameworks, which have models broadcast change events to subscribers. For example, views may subscribe to models so they may update themselves to render new model state each time it changes.
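To illustrate the event-driven style, and the way it shows up inside model-view-controller designs, here is a minimal sketch of a model that broadcasts changes to subscribed views. The `TodoModel` and `TextView` classes are hypothetical and are not drawn from any particular user interface framework.

```python
class TodoModel:
    """Holds the data and broadcasts a change event whenever it changes."""

    def __init__(self):
        self._items = []
        self._subscribers = []  # callables to notify on each change

    def subscribe(self, callback):
        self._subscribers.append(callback)

    def add(self, text):
        self._items.append(text)
        self._broadcast()

    def _broadcast(self):
        # Send each subscriber a copy so views cannot mutate model state.
        snapshot = list(self._items)
        for callback in self._subscribers:
            callback(snapshot)


class TextView:
    """Renders the model as text; it re-renders each time the model changes."""

    def __init__(self, model):
        self.rendered = ""
        model.subscribe(self.render)

    def render(self, items):
        self.rendered = "\n".join(f"- {item}" for item in items)


model = TodoModel()
view = TextView(model)
model.add("buy milk")
model.add("write tests")
```

The model never refers to `TextView` by name; it only knows it has a list of callbacks. That is what lets you attach a second, different view without touching the model.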

Architectural styles come in all shapes and sizes. Some are smaller design patterns of information sharing 4 , whereas others are ubiquitous but specialized patterns such as the architectures required to support undo and cancel in user interfaces 3 .
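As a rough illustration of why undo is an architectural concern rather than a feature you bolt on, here is a minimal sketch of one common approach: every action records how to reverse itself on a stack. The `UndoableList` class is hypothetical, and this is not necessarily the pattern described in the cited work.

```python
class UndoableList:
    """Hypothetical to-do list in which every change records its own inverse."""

    def __init__(self):
        self.items = []
        self._undo_stack = []  # callables that reverse past actions, newest last

    def add(self, text):
        self.items.append(text)
        # Undoing an add removes the last item; this is valid because
        # actions are always undone in reverse order.
        self._undo_stack.append(lambda: self.items.pop())

    def delete(self, index):
        removed = self.items.pop(index)
        # Undoing a delete puts the removed item back where it was.
        self._undo_stack.append(lambda: self.items.insert(index, removed))

    def undo(self):
        # Do nothing if there is no action left to undo.
        if self._undo_stack:
            self._undo_stack.pop()()
```

Every method that changes state has to participate in this scheme. If even one part of the program modifies `items` directly, the recorded inverses stop being correct, which is why support for undo shapes the whole architecture.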

One fundamental unit of which an architecture is composed is a component. This is basically a word that refers to any abstraction (any code, really) that attempts to encapsulate some well-defined functionality or behavior separate from other functionality and behavior. For example, consider the Java class Math: it encapsulates a wide range of related mathematical functions. This class has an interface that decides how it can communicate with other components (sending arguments to a math function and getting a return value). Components can be more than classes though: they might be a data structure, a set of functions, a library, an API, or even something like a web service. All of these are abstractions that encapsulate interrelated computation and state for some well-defined purpose.

The second fundamental unit of architecture is connectors. Connectors are code that transmits information between components. They’re brokers that connect components, but do not necessarily have meaningful behaviors or states of their own. Connectors can be things like function calls, web service API calls, events, requests, and so on. None of these mechanisms store state or functionality themselves; instead, they are the things that tie components’ functionality and state together.

Even with carefully selected architectures, systems can still be difficult to put together, leading to architectural mismatch 8 . When mismatch occurs, connecting two styles can require dramatic amounts of code, imposing significant risk of defects and cost of maintenance. One common example of mismatch occurs with the ubiquitous use of database schemas with client/server web applications. A single change in a database schema can often result in dramatic changes in an application, as every line of code that uses that part of the schema either directly or indirectly must be updated 15 . This kind of mismatch occurs because the component that manages data (the database) and the component that renders data (the user interface) are highly “coupled” with the database schema: the user interface needs to know a lot about the data, its meaning, and its structure in order to render it meaningfully.
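One way to limit this kind of coupling is to confine knowledge of the schema to a single place. The sketch below uses Python's built-in `sqlite3` module with a hypothetical `todos` table; the table, its column names, and the `load_todos` and `render` functions are all assumptions made for illustration.

```python
import sqlite3


def load_todos(conn):
    """The only function that knows the table and column names."""
    rows = conn.execute("SELECT title, is_done FROM todos ORDER BY id").fetchall()
    return [{"text": title, "done": bool(is_done)} for title, is_done in rows]


def render(todos):
    """Knows nothing about the schema, only the dictionaries load_todos returns."""
    return [("[x] " if todo["done"] else "[ ] ") + todo["text"] for todo in todos]


# Build a throwaway in-memory database to exercise the two functions.
conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE todos (id INTEGER PRIMARY KEY, title TEXT, is_done INTEGER)")
conn.executemany(
    "INSERT INTO todos (title, is_done) VALUES (?, ?)",
    [("buy milk", 0), ("write tests", 1)],
)
lines = render(load_todos(conn))
```

If the `title` column were renamed, only `load_todos` would need to change. This does not eliminate the coupling, since `render` still depends on the meaning of the data, but it shrinks the amount of code that a schema change can break.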

The most common approach to dealing with both architectural mismatch and the changing of requirements over time is refactoring, which means changing the architecture of an implementation without changing its behavior. Refactoring is something most developers do as part of changing a system 11,16 . Refactoring code to eliminate mismatch and technical debt can simplify change in the future, saving time 12 and preventing future defects 10 . However, because refactoring remains challenging, the difficulty of changing an architecture is often used as a rationale for rejecting demands for change from users. For example, Google does not allow one to change their Gmail address, which greatly harms people who have changed their name (such as this author when she came out as a trans woman), forcing them to either live with an address that includes their old name, or abandon their Google account, with no ability to transfer documents or settings. The rationale for this has nothing to do with policy and everything to do with the fact that the original architecture of Gmail treats the email address as a stable, unique identifier for an account. Changing this basic assumption throughout Gmail’s implementation would be an immense refactoring task.
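To see why an identifier choice like this is so hard to undo, consider the following sketch of two hypothetical designs. It says nothing about how Gmail is actually implemented; the class names and data are invented purely to show the difference between using an email address as the key and using an opaque account id.

```python
import itertools


class AccountsByEmail:
    """Design A: the email address is the key for everything an account owns."""

    def __init__(self):
        self.documents = {}  # email -> list of document titles

    def add_document(self, email, title):
        self.documents.setdefault(email, []).append(title)


class AccountsById:
    """Design B: an opaque id is the key; the email is just an attribute."""

    def __init__(self):
        self._next_id = itertools.count(1)
        self.email_of = {}   # account id -> current email address
        self.documents = {}  # account id -> list of document titles

    def create(self, email):
        account_id = next(self._next_id)
        self.email_of[account_id] = email
        self.documents[account_id] = []
        return account_id

    def add_document(self, account_id, title):
        self.documents[account_id].append(title)

    def change_email(self, account_id, new_email):
        # One assignment; nothing keyed by the id has to move.
        self.email_of[account_id] = new_email
```

In Design A, changing an address means finding and rewriting every structure keyed by the old address. In a real system that could span many services and stored records. Moving from Design A to Design B after the fact is exactly the kind of behavior-preserving but far-reaching change that refactoring describes.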

Research on the activity of software architecture is somewhat sparse. One of the more recent syntheses of this work is Petre et al.’s book, Software Design Decoded 14 , which distills many of the practices and skills of software design into a set of succinct ideas. For example, the book states, “Every design problem has multiple, if not infinite, ways of solving it. Experts strongly prefer simpler solutions over complex ones, for they know that such solutions are easier to understand and change in the future.” And yet, in practice, studies of how projects use APIs often show that developers do the exact opposite, building projects with dependencies on large numbers of sometimes trivial APIs. This behavior suggests that while software architects like simplicity of implementation, software developers often choose whatever is easiest to build, rather than whatever is least risky to maintain over time 1 .

References

-

Rabe Abdalkareem, Olivier Nourry, Sultan Wehaibi, Suhaib Mujahid, and Emad Shihab (2017). Why do developers use trivial packages? An empirical case study on npm. ACM SIGSOFT Foundations of Software Engineering (FSE).

-

Emad Aghajani, Csaba Nagy, Mario Linares-Vásquez, Laura Moreno, Gabriele Bavota, Michele Lanza, David C. Shepherd (2020). Software documentation: the practitioners' perspective. ACM/IEEE International Conference on Software Engineering.

-

Len Bass, Bonnie E. John (2003). Linking usability to software architecture patterns through general scenarios. Journal of Systems and Software.

-

Kent Beck, Ron Crocker, Gerard Meszaros, John Vlissides, James O. Coplien, Lutz Dominick, and Frances Paulisch (1996). Industrial experience with design patterns. ACM/IEEE International Conference on Software Engineering.

-

Jürgen Cito, Philipp Leitner, Thomas Fritz, and Harald C. Gall (2015). The making of cloud applications: an empirical study on software development for the cloud. ACM Joint European Software Engineering Conference and Symposium on the Foundations of Software Engineering (ESEC/FSE).

-

Neil A. Ernst, Stephany Bellomo, Ipek Ozkaya, Robert L. Nord, and Ian Gorton (2015). Measure it? Manage it? Ignore it? Software practitioners and technical debt. ACM Joint European Software Engineering Conference and Symposium on the Foundations of Software Engineering (ESEC/FSE).

-